With technology advancing ever faster, businesses must keep up or risk falling behind. The stakes are high, and so is the pressure to deliver innovative software. To meet this challenge, savvy companies are turning to practical methods such as DevOps to stay ahead of the curve. Among other benefits, DevOps makes scaling development teams workable.

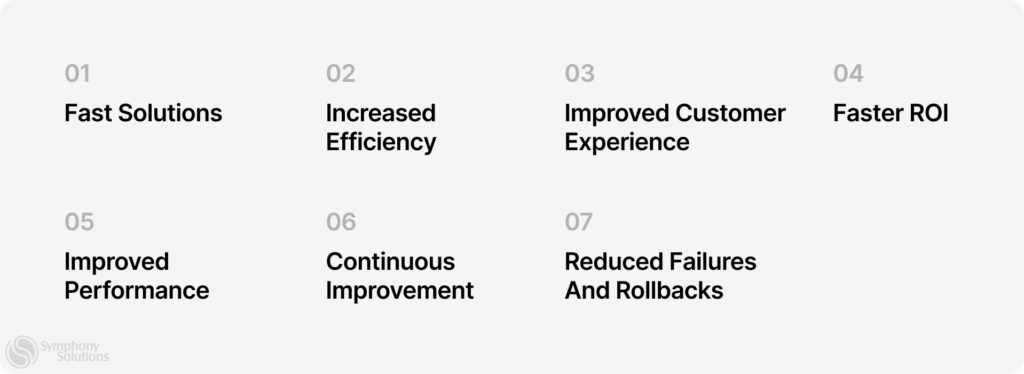

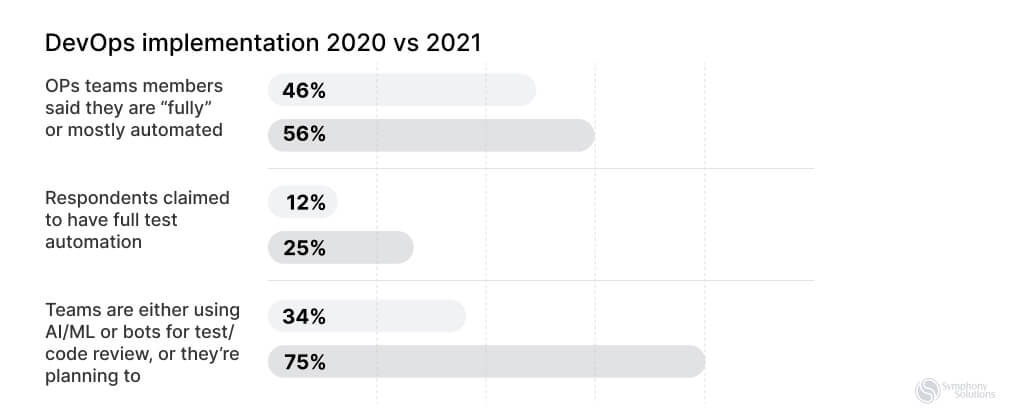

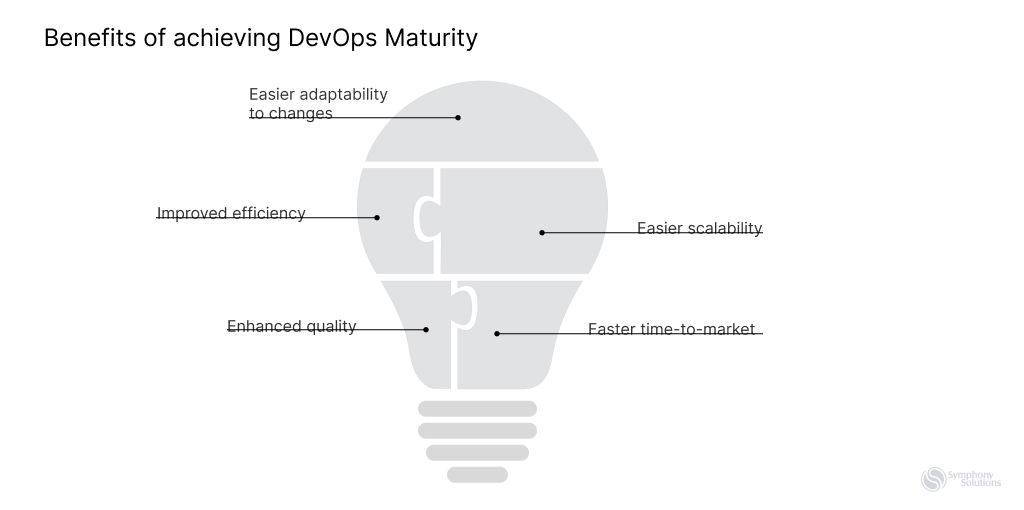

Did you know that high-performing DevOps organizations deploy code 200 times more frequently and recover from failures 24 times faster than low performers? This article offers insights and strategies to help enterprises successfully scale their DevOps practices and reap the benefits, including better software quality, reliability, and security.

What is DevOps?

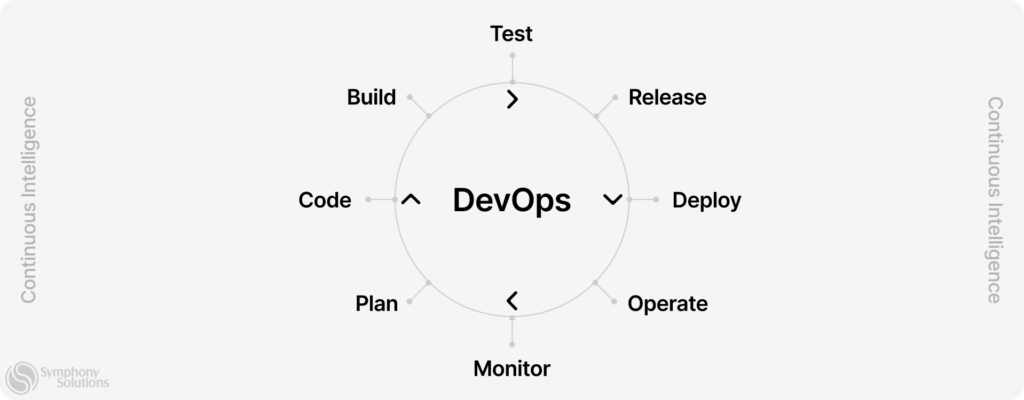

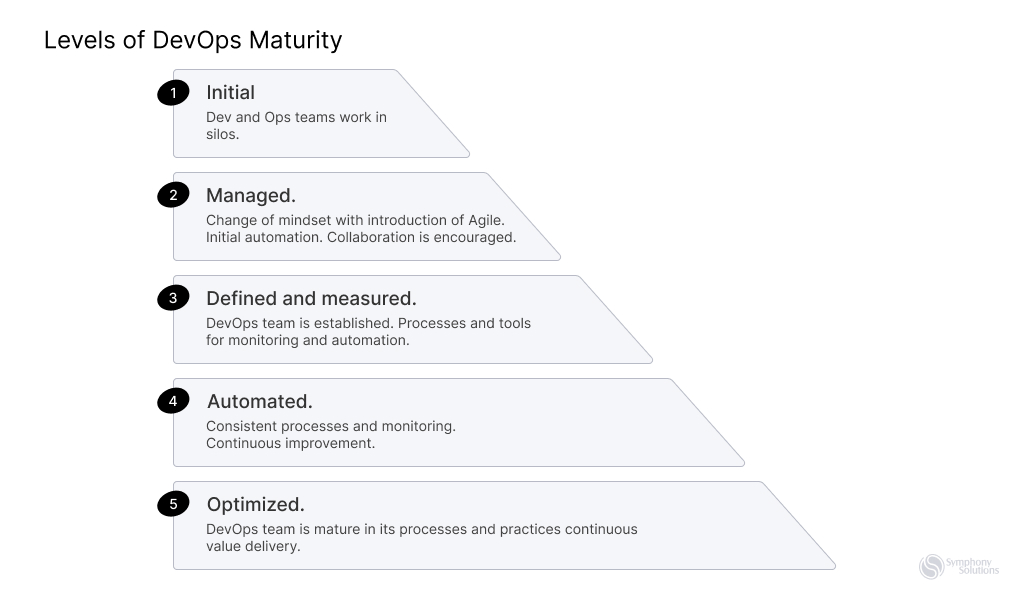

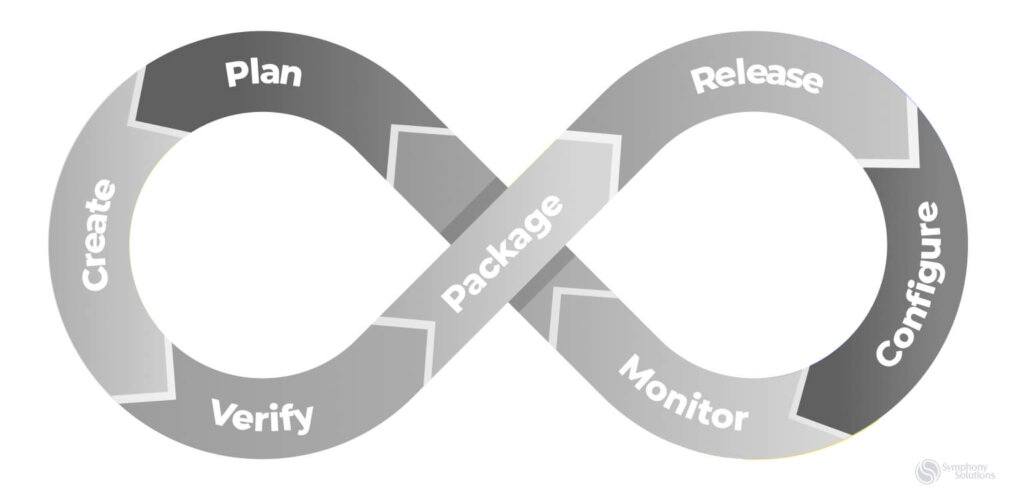

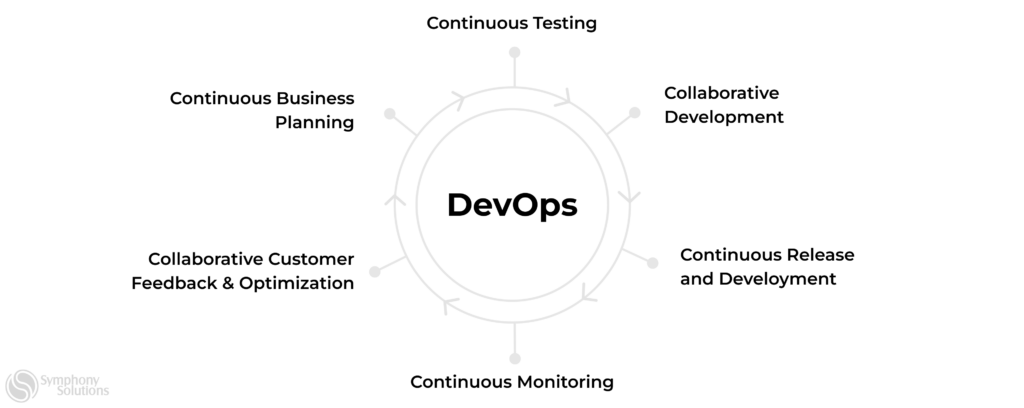

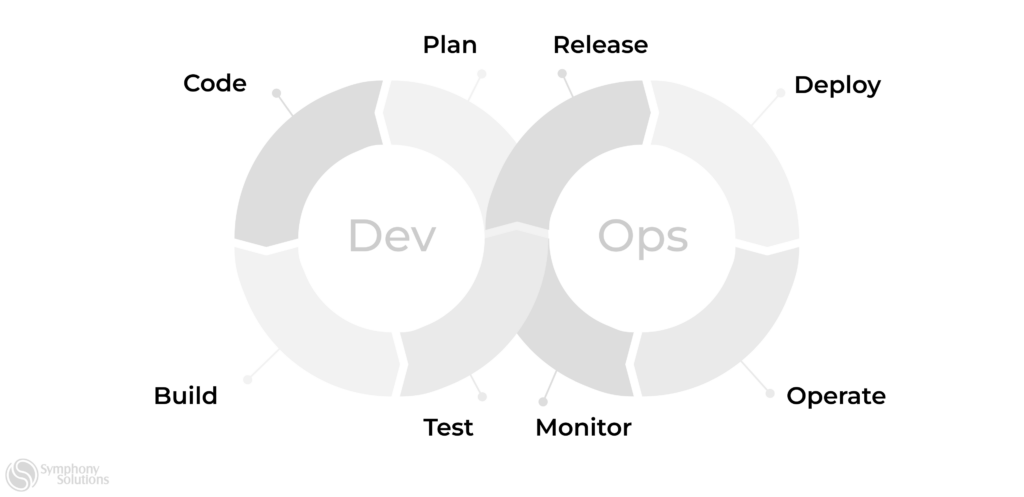

DevOps is a methodology that fosters efficient collaboration between development and operations teams. It encourages a holistic view of the IT process, with teams continuously refining their roles and responsibilities to meet the needs of the business.

DevOps is all about bringing development and operations teams together. It’s about breaking down the walls and getting everyone on the same page. Developers get involved in infrastructure decisions and deployment, and operations teams get a seat at the table from the early stages of development. The result? More reliable software, faster delivery times, and a better ability to respond to market changes.

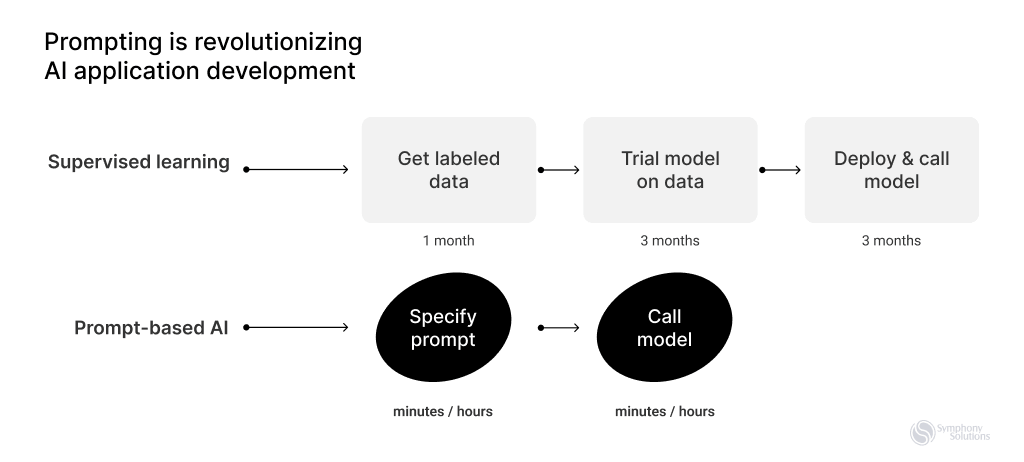

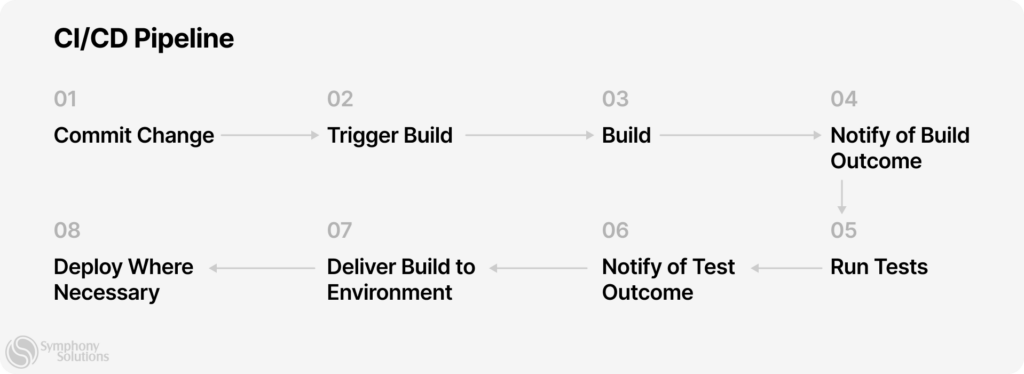

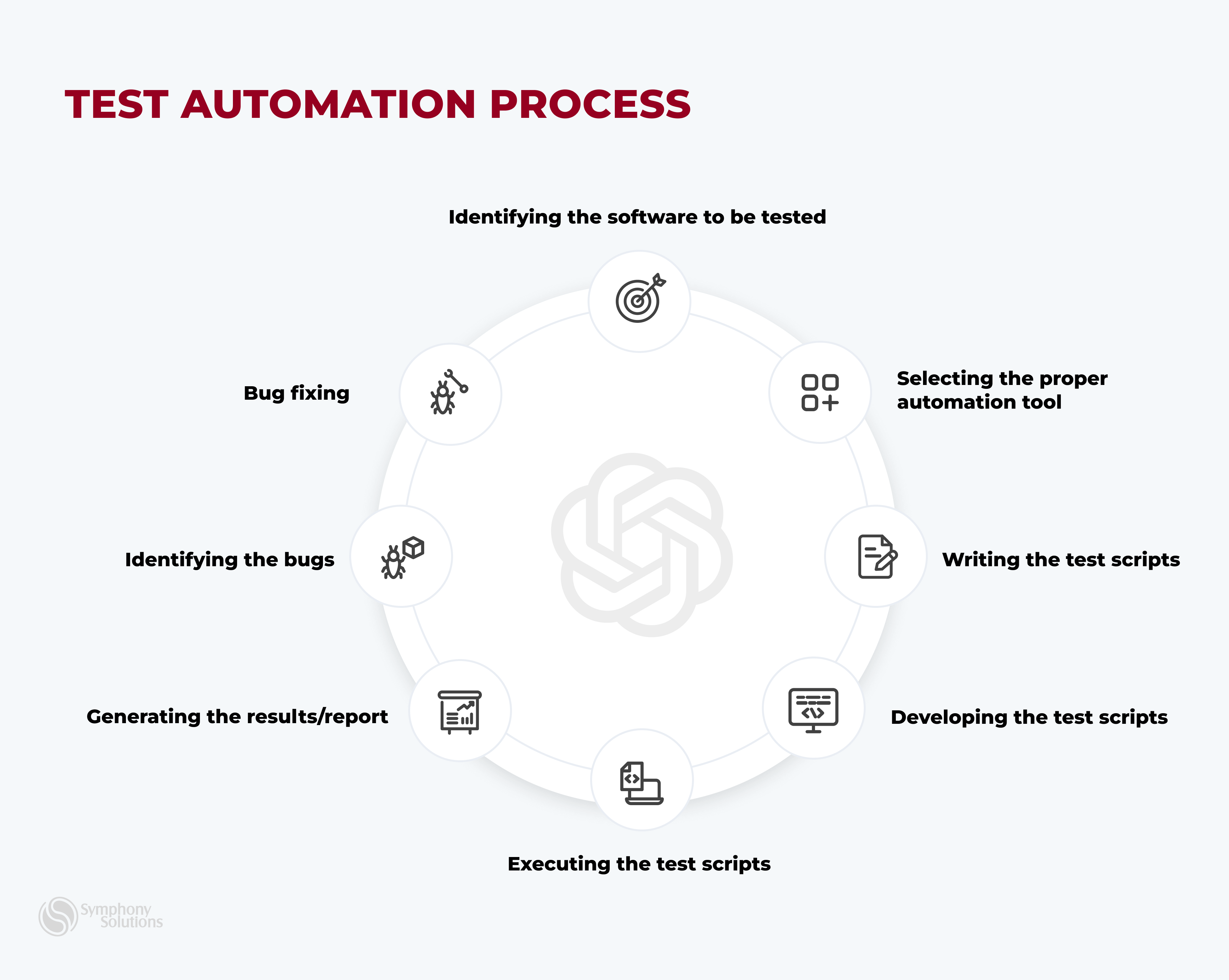

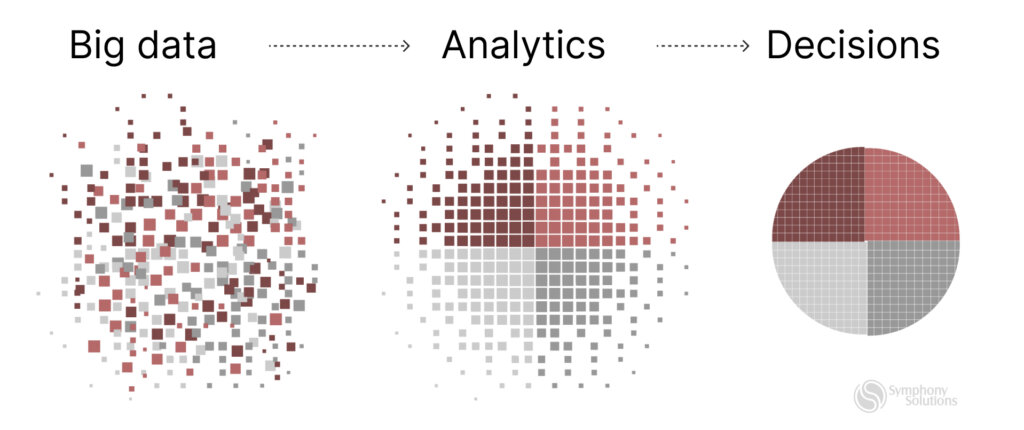

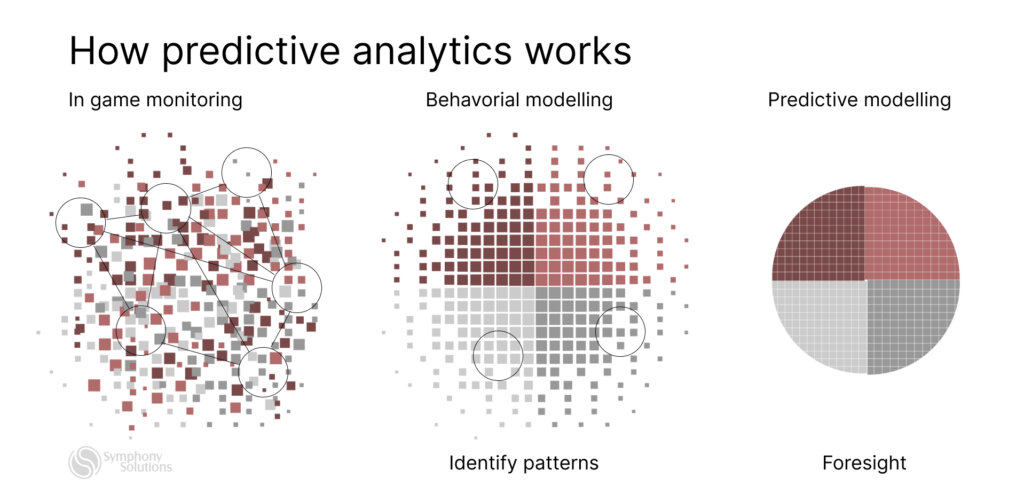

But DevOps isn’t just about collaboration. It’s also about automation and continuous improvement. Tools for continuous integration and continuous delivery (CI/CD) are key here, automating the testing and deployment of code. This cuts down on human error and speeds up the development cycle. And with regular feedback and performance metrics, teams can keep improving their processes and tools.
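To make the CI/CD idea concrete, here is a minimal Python sketch of the fail-fast behavior most pipelines share: stages run in order, and a failing stage stops everything after it, so broken code never reaches deployment. The `Stage` class, the `run_pipeline` function, and the three stage functions are hypothetical placeholders, not the API of any particular CI/CD tool; a real pipeline would call build tools, test runners, and deployment scripts instead.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Stage:
    """A named pipeline step; run() returns True on success."""
    name: str
    run: Callable[[], bool]


def run_pipeline(stages: list[Stage]) -> tuple[bool, list[str]]:
    """Run stages in order and stop at the first failure (fail fast).

    Returns (overall_success, names_of_stages_that_passed).
    """
    completed: list[str] = []
    for stage in stages:
        if not stage.run():
            print(f"Stage '{stage.name}' failed; later stages skipped")
            return False, completed
        completed.append(stage.name)
    return True, completed


# Hypothetical stages: placeholders for real build, test, and deploy steps.
def build() -> bool:
    return True


def unit_tests() -> bool:
    return True


def deploy_to_staging() -> bool:
    return True


if __name__ == "__main__":
    ok, done = run_pipeline([
        Stage("build", build),
        Stage("unit-tests", unit_tests),
        Stage("deploy-staging", deploy_to_staging),
    ])
    print(ok, done)
```

Because a failed test stage returns before the deploy stage is ever called, automation, rather than a person remembering to check, is what keeps untested code out of production.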

What does scaling in DevOps mean for an enterprise?

Scaling in DevOps is an enterprise’s ability to build strategies flexible enough to adjust to seasonal shifts in demand. When demand is high, your teams can grow to meet the pressure, then scale back when demand drops, thanks to the automation at the heart of DevOps.

Because its practices let team members collaborate, stay focused, and find innovative ways to deploy software faster, DevOps provides an ideal environment for scaling.
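On the infrastructure side, scaling up and back is usually automated with a target-tracking rule. The sketch below uses the formula documented for the Kubernetes Horizontal Pod Autoscaler, desired = ceil(current replicas × current metric / target metric), clamped to a minimum and maximum. The function name, the CPU utilization figures, and the default bounds are illustrative assumptions, not settings from any real system.

```python
import math


def desired_replicas(current_replicas: int,
                     current_utilization: float,
                     target_utilization: float,
                     min_replicas: int = 1,
                     max_replicas: int = 20) -> int:
    """Target-tracking scaling rule in the style of the Kubernetes
    Horizontal Pod Autoscaler:
        desired = ceil(current_replicas * current / target)
    The result is clamped to [min_replicas, max_replicas]."""
    ratio = current_utilization / target_utilization
    desired = math.ceil(current_replicas * ratio)
    return max(min_replicas, min(max_replicas, desired))


if __name__ == "__main__":
    # Peak season: 4 instances running at 90% CPU against a 60% target.
    print(desired_replicas(4, 90, 60))  # scales out to 6
    # Quiet season: the same 4 instances at 30% CPU.
    print(desired_replicas(4, 30, 60))  # scales back to 2
```

The clamp matters in practice: it stops a traffic spike from launching unlimited instances and keeps at least one instance running when demand falls to zero.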

Use cases for DevOps in the enterprise

Here are some typical DevOps use cases:

Speed up new releases with DevOps

Software Company Accelerates Product Launches

Problem

The client was struggling with slow release cycles due to a traditional development approach. The lack of integration between the development and operations teams often led to last-minute issues, causing delays in product releases.

Solution

Recognizing their need for a more efficient process, the client approached us at Symphony Solutions with a challenge. We proposed the adoption of DevOps practices. Our team implemented continuous integration and continuous delivery (CI/CD) pipelines, automating the testing and deployment of their code. This not only reduced the risk of errors but also significantly sped up their development cycle.

The adoption of DevOps practices led to a 50% reduction in the client’s time-to-market. They were able to launch new products much faster, improving their competitive position in the market. The smoother and more reliable releases also enhanced their customer satisfaction. This case study demonstrates how Symphony Solutions can help companies speed up software releases using DevOps practices.

Optimize your processes with DevOps

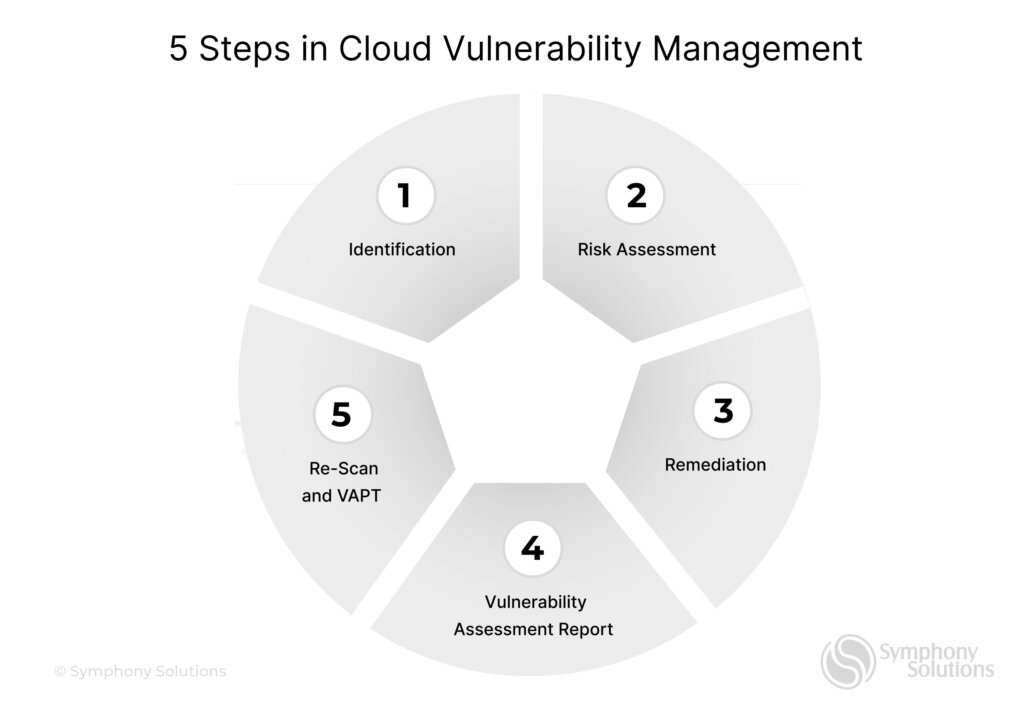

Creating a Secure AWS VPN Connection with Complex Hybrid Cloud Authentication for an SAP Solution

Problem

SAP required a custom solution that would deliver cloud-native benefits, such as pay-as-you-go cost efficiency, high scalability, and flexibility, while running on the client’s on-premises infrastructure for maximum data confidentiality and security.

They needed an Agile and reliable partner to work closely, efficiently, and intuitively with their in-house engineering team and subcontractors. The aim was to allow the client to focus more on their business initiatives and less on IT infrastructure management.

Solution

Symphony Solutions used DevOps practices to set up the AWS infrastructure and issue the custom HP certificates required to establish an AWS VPN connection between the customer’s on-premises infrastructure and an external management system in the AWS public cloud.

Symphony Solutions’ team implemented a public key infrastructure (PKI) inside AWS to issue HP-affiliated certificates. This ensured secure and reliable connectivity between the on-premises infrastructure running SAP HANA services and VMware-hosted applications.

Get to the bottom of incidents with DevOps

Cloud Solution Extends Portfolio

Problem

The client already had a product in production (Compliance 1.0) that had been developed by another vendor and relied on third-party libraries for most of its features. This unavoidable dependency was costly for the client, and the original solution also had significant maintainability and scalability issues. Eventually, the client decided to build version 2.0 of the product, replacing the third-party service with its own modern, scalable, secure, and easy-to-maintain implementation.

Solution

Although the client first came to Symphony Solutions seeking help maintaining the Version 1.0 Continuous Compliance tool, the team’s excellent performance convinced the client that what it actually needed was a new solution to start and scale DevOps in the enterprise.

So, the client decided to eliminate its dependence on the third-party vendor and work exclusively with Symphony Solutions on an entirely new Version 2.0 architecture.

The teams at Symphony Solutions worked with the client to determine a staged process to:

- Conduct a set of automated tests to assess the current state of the product.

- Develop a new solution for cost-effective, timely and secure development.

- Plan and develop Version 2.0 architecture.

- Research the best ways to implement the necessary functionality.

In 2020, Symphony Solutions was chosen to replace another vendor in delivering one more HPE GreenLake service: Continuous Cost Control. Having shown great diligence and high delivery quality, the team was once again chosen to take over a project from another vendor’s team.

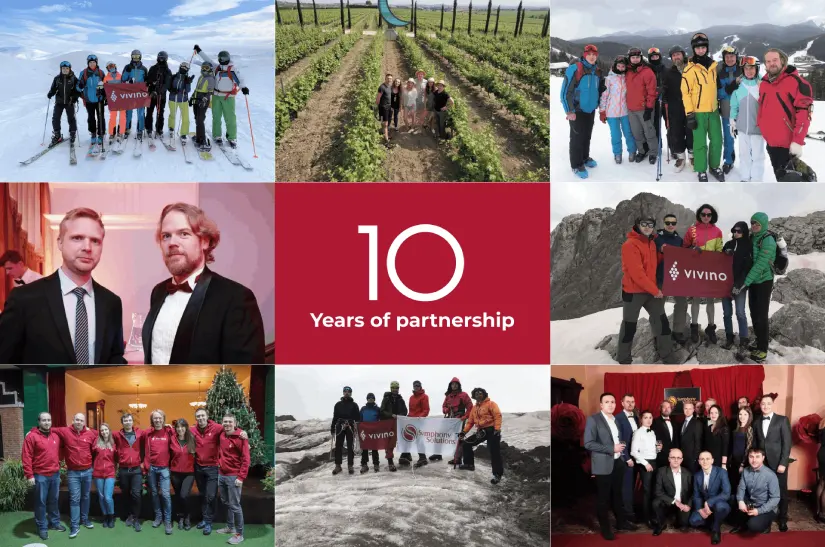

Cloud Engineering Powers Business

Problem

Vivino was a small online wine marketplace start-up, based on an idea from Danish founders Heini Zachariassen and Theis Sondergaard in 2010. However, the founders lacked the technical expertise to build a prototype of their product. They needed engineers who could turn work around quickly across the entire product development cycle.

Solution

Vivino came to Symphony Solutions in 2013. The Symphony Solutions extended team provided Vivino with Cloud engineers who developed a process to go from idea to product and then created services for the new wine app. They:

- Designed the architecture and infrastructure to accommodate the growing database of wines, users, ratings, and prices

- Migrated the codebase from PHP to GoLang

- Developed new backend and UI features

- Worked on database modelling and integration with new services

- Supported and maintained data pipelines and processes

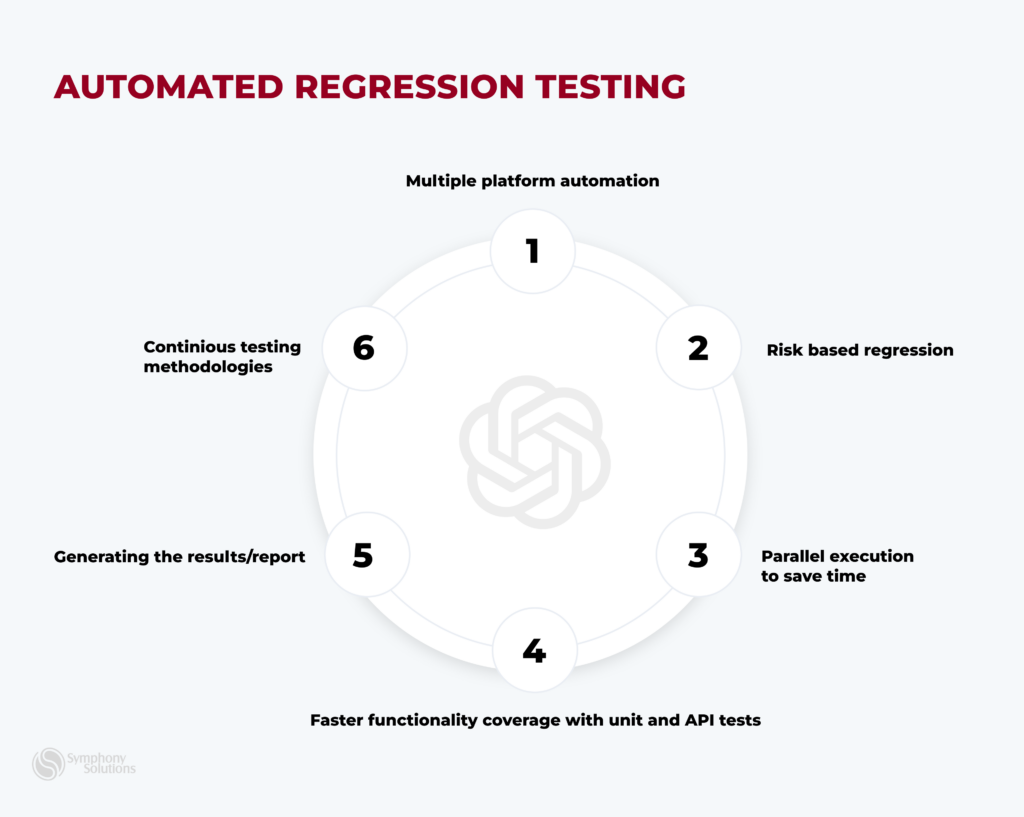

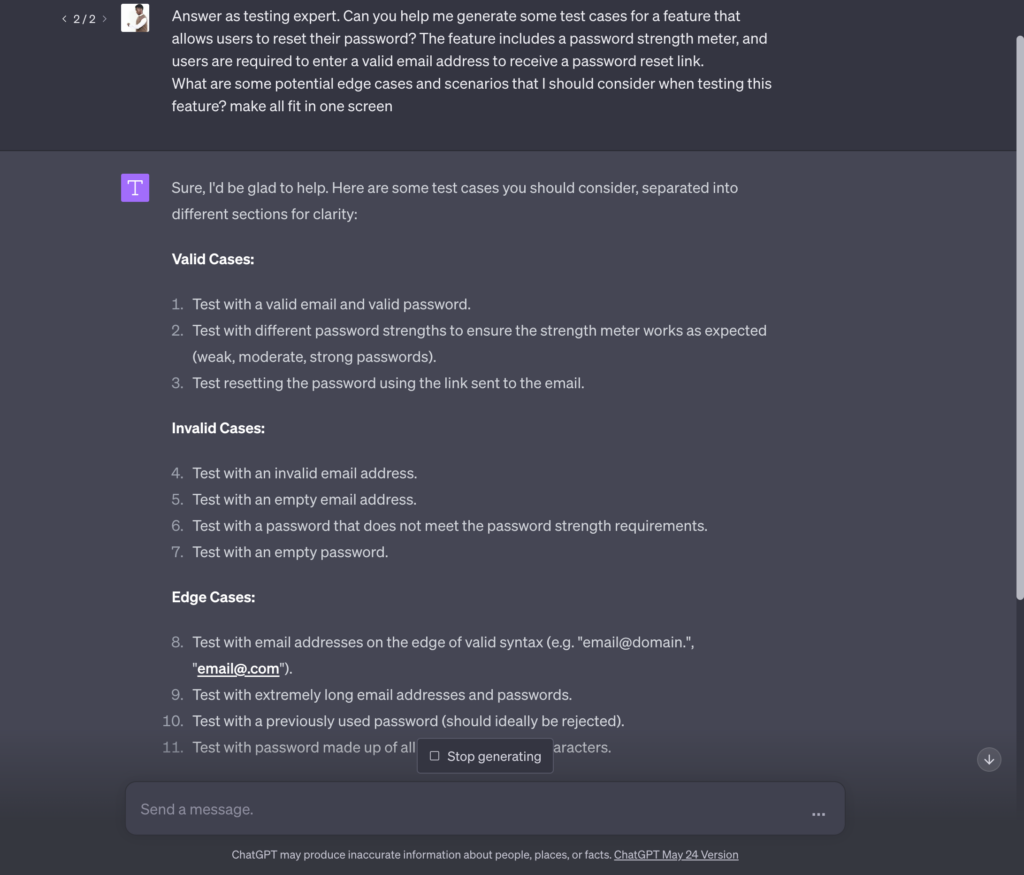

Once developed, the solutions needed to be tested to minimize the risk of broken software, so Symphony Solutions managed the QA process, covering both manual and automated testing.

Retail Banking Powered by Salesforce

Problem

The client needed to modernize its outdated client portal to keep up with ever-growing demand and high industry standards. As one of the largest commercial banks on the market, it needed a fast, stable solution that maintained high security and gave customers a full spectrum of services while new features and functionality were added.

Solution

After a thorough examination, Symphony Solutions opted to build an entirely new portal from the ground up. Here’s what they did:

- Designed a highly configurable portal that’s easy to maintain from an Admin perspective.

- Used Lightning, Aura, and Web components to implement the UI in Salesforce, creating a custom theme and content layouts for easy navigation and enhanced functionality.

- Configured Single Sign-On for third parties via the Onegini Identity Provider.

- Implemented High Assurance functionality and features that require multi-factor authentication, enabling the user to perform high-security financial transactions.

As an Agile company, Symphony Solutions simultaneously conducted different stages of the development process, maintaining good cooperation with other teams and bringing the project to completion in record time.

Steps to Scaling DevOps in the Enterprise

You can follow these steps to scale development teams in your business:

Define what DevOps means for your enterprise

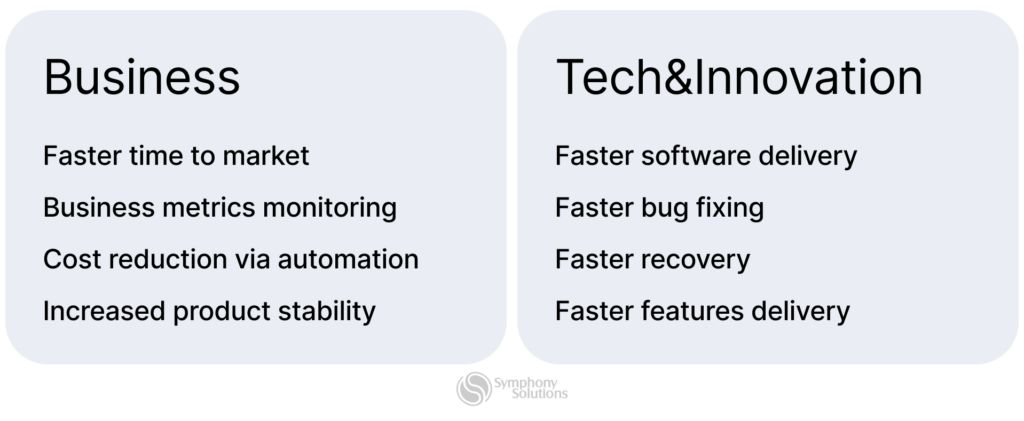

Companies view DevOps differently depending on what they need from the practice, so start by determining which benefits it can offer your enterprise.

Do you want to:

- Improve your app code’s quality?

- Create a self-sufficient work culture?

- Encourage seamless communication and streamline collaboration?

Your answer will guide you on what metrics to track as you scale DevOps.

Acquire top talent

Growing demand for DevOps teams has made skilled DevOps professionals highly sought after, and most top talent wants to work for flexible, innovative companies.

So, consider hiring a DevOps evangelist to introduce DevOps processes in your organization. Evangelists work with architects and industry experts to translate new technologies and business needs into company-specific software designs.

Perform an initial stock-take

Identify what already exists in your company that supports a DevOps culture, such as DevOps-trained staff and existing deployment channels. Then document, highlight, and improve these assets so they can serve as launch pads for your team to find efficiencies and deliver more substantial business results.

Track DevOps metrics

As you scale your business processes, monitor metrics that track your DevOps performance. Select the metrics that align with your definition of DevOps in the enterprise. These are some of the standard metrics to look out for:

- Deployment frequency

- Deployment time

- Lead time

- Customer tickets

- Automated test pass %

- Defect escape rate

- Error rates

- Application usage and traffic

- Application performance

- Mean time to detection (MTTD)

- Mean time to recovery (MTTR), etc.
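Several of these metrics are simple arithmetic over deployment and incident records. The Python sketch below computes deployment frequency, mean lead time, MTTD, MTTR, and defect escape rate from a few hypothetical records. The record fields and function names are assumptions for illustration; in practice, the timestamps would come from your version control, CI/CD, and incident-management tools, and teams define some metrics differently (for example, measuring MTTR from detection rather than from the start of an incident).

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import mean


@dataclass
class Deployment:
    committed_at: datetime  # when the change was committed
    deployed_at: datetime   # when it reached production


@dataclass
class Incident:
    started_at: datetime    # when the failure began
    detected_at: datetime   # when monitoring or a user flagged it
    resolved_at: datetime   # when service was restored


def hours(delta: timedelta) -> float:
    return delta.total_seconds() / 3600


def deployment_frequency(deploys: list[Deployment], period_days: int) -> float:
    """Average production deployments per day over the period."""
    return len(deploys) / period_days


def mean_lead_time_hours(deploys: list[Deployment]) -> float:
    """Average time from commit to production."""
    return mean(hours(d.deployed_at - d.committed_at) for d in deploys)


def mttd_hours(incidents: list[Incident]) -> float:
    """Mean time to detection: from failure start to detection."""
    return mean(hours(i.detected_at - i.started_at) for i in incidents)


def mttr_hours(incidents: list[Incident]) -> float:
    """Mean time to recovery, measured here from failure start to restoration."""
    return mean(hours(i.resolved_at - i.started_at) for i in incidents)


def defect_escape_rate(found_in_production: int, found_before_release: int) -> float:
    """Share of all defects that were only caught after release."""
    total = found_in_production + found_before_release
    return found_in_production / total if total else 0.0


if __name__ == "__main__":
    # Hypothetical records for one week.
    deploys = [
        Deployment(datetime(2024, 1, 1, 9), datetime(2024, 1, 1, 15)),   # 6 h
        Deployment(datetime(2024, 1, 3, 10), datetime(2024, 1, 4, 10)),  # 24 h
        Deployment(datetime(2024, 1, 5, 8), datetime(2024, 1, 5, 11)),   # 3 h
    ]
    incidents = [
        Incident(datetime(2024, 1, 2, 12), datetime(2024, 1, 2, 12, 30),
                 datetime(2024, 1, 2, 14)),
        Incident(datetime(2024, 1, 6, 20), datetime(2024, 1, 6, 21),
                 datetime(2024, 1, 7, 0)),
    ]
    print(f"Deployments/day: {deployment_frequency(deploys, 7):.2f}")  # 0.43
    print(f"Lead time (h):   {mean_lead_time_hours(deploys):.1f}")     # 11.0
    print(f"MTTD (h):        {mttd_hours(incidents):.2f}")             # 0.75
    print(f"MTTR (h):        {mttr_hours(incidents):.1f}")             # 3.0
    print(f"Escape rate:     {defect_escape_rate(2, 18):.0%}")         # 10%
```

Tracking these numbers release over release, rather than as one-off snapshots, is what shows whether scaling is actually improving delivery.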

Pre-empt the culture shift

Growing enterprises often have large, independently structured teams, so business departments may need help understanding the intricacies of DevOps practices. Anticipating and preparing for the culture shift will help business leaders understand why DevOps emphasizes collaboration across teams to develop and support innovative software.

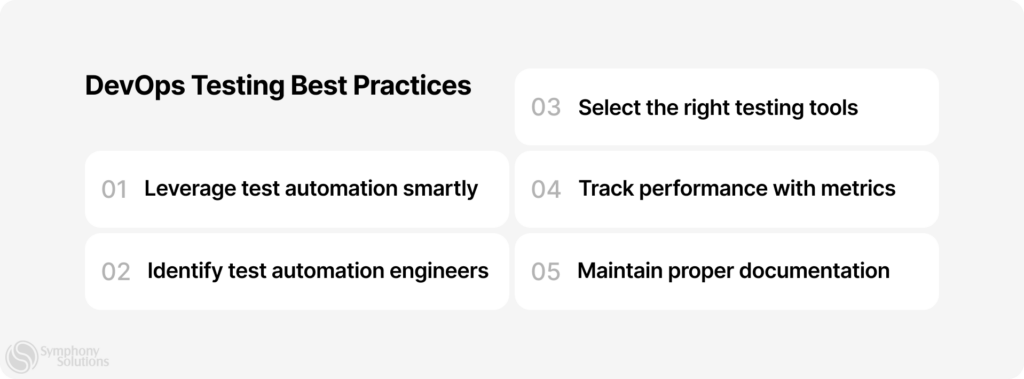

Best Practices for Scaling DevOps in the Enterprise

DevOps can be challenging, especially for larger companies, because of the complex silos between IT departments that need to be broken down. But wherever your enterprise stands in its DevOps implementation, these best practices for scaling will come in handy.

Develop Standardized Project Templates and Policies

DevOps teams should emphasize standardization across all DevOps projects, as it is one of the most important practices for creating long-term efficiencies in development.

You can achieve enterprise DevOps standardization by building and sticking to project templates and policies. Many DevOps tools offer built-in templates that make process data easy to access and interpret, so successful projects can be repeated and scaled.

Policy standardization is also essential as it guides projects and associated tools, ensuring they adhere to appropriate security and regulatory requirements throughout development.
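Policy standardization can be automated by checking each project’s configuration against an organization-wide template before the project enters the pipeline. The Python sketch below illustrates the idea. The required fields, the allowed data classifications, and the `check_project` function are hypothetical examples, not a feature of any specific DevOps tool; real policies would encode your own security and regulatory requirements.

```python
# Hypothetical organization-wide project template.
REQUIRED_FIELDS = {"owner", "ci_pipeline", "security_scan", "data_classification"}
ALLOWED_CLASSIFICATIONS = {"public", "internal", "confidential"}


def check_project(config: dict) -> list[str]:
    """Return a list of policy violations; an empty list means compliant."""
    violations = []
    # Every project must declare the fields the template requires.
    for field in sorted(REQUIRED_FIELDS - set(config)):
        violations.append(f"missing required field: {field}")
    # Security scanning may not be switched off.
    if config.get("security_scan") is False:
        violations.append("security_scan must be enabled")
    # Data classification must use the agreed vocabulary.
    classification = config.get("data_classification")
    if classification is not None and classification not in ALLOWED_CLASSIFICATIONS:
        violations.append(f"unknown data_classification: {classification}")
    return violations


if __name__ == "__main__":
    compliant = {
        "owner": "payments-team",
        "ci_pipeline": "standard-web",
        "security_scan": True,
        "data_classification": "confidential",
    }
    draft = {"owner": "search-team", "security_scan": False}
    print(check_project(compliant))
    for problem in check_project(draft):
        print(problem)
```

Running a check like this automatically, for example as the first stage of every pipeline, means new projects inherit the policy instead of relying on each team to remember it.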

Create Interdepartmental Goals to Bust Silos

Enterprises that can afford dedicated technical teams, such as IT, security, or operations, often find that these teams have distinct roles and workflows. As a result, they tend to work independently even on shared projects.

Although a siloed approach may be practical for specific projects, it can carry hidden costs, errors, and lost time. For efficient DevOps implementation, create interdepartmental goals with tasks and metrics that encourage teams to collaborate for better, more holistic results.

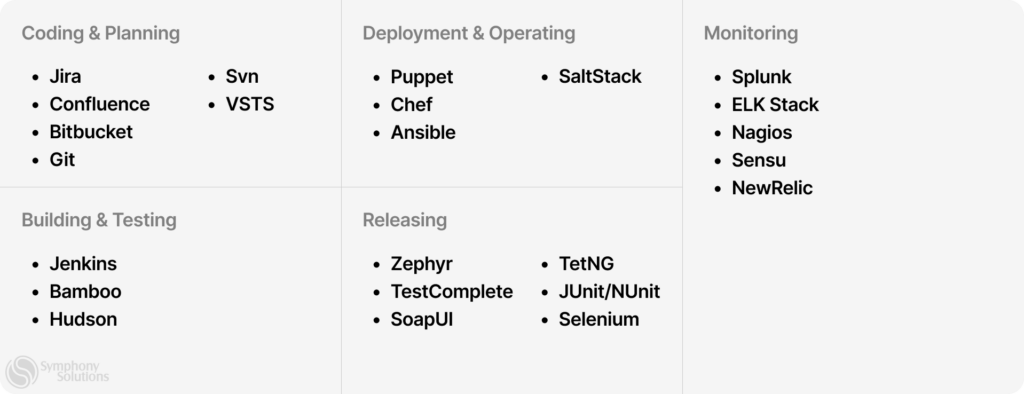

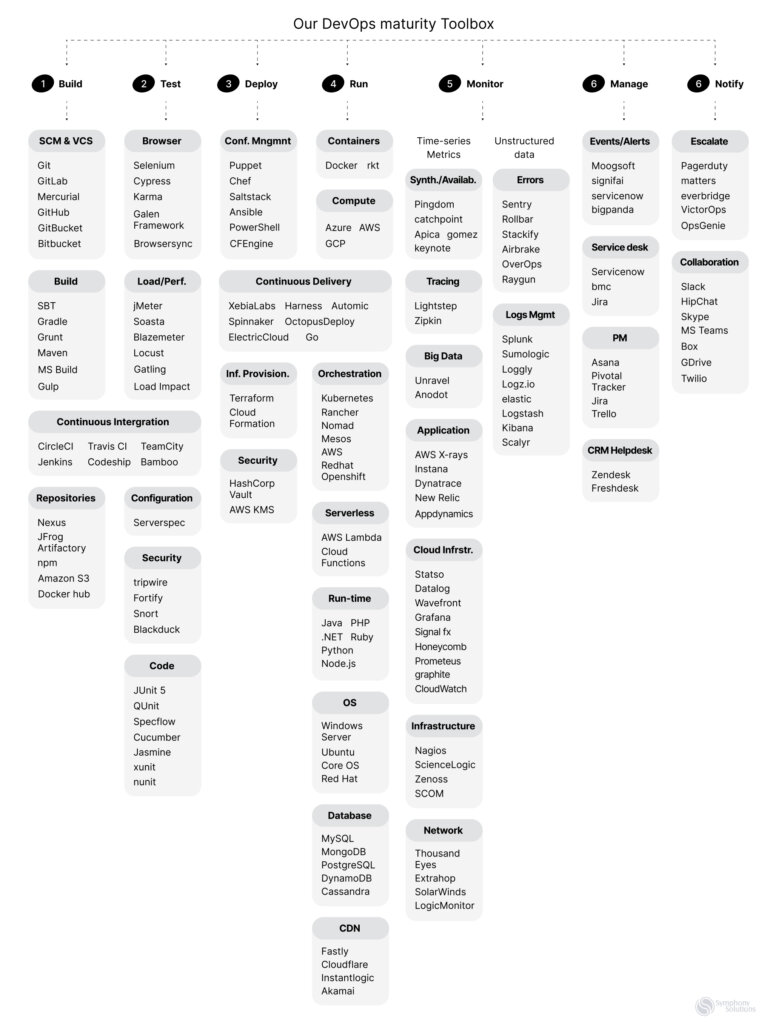

Use DevOps Tools to Support Team Goals

You can use dedicated DevOps tools to automate work and to collect, document, and highlight data from individual DevOps projects. Consider adding tools in these categories to support your objectives:

- Application performance monitoring

- Container management

- Configuration management

- Data cleansing and data quality management

- Project management

- CI/CD and workflow automation

- Collaboration and communication

- Version control

Rely on Continuous Integration and Continuous Delivery (CI/CD)

Two of the most fundamental DevOps principles for enterprises are shortening the delivery lifecycle and releasing product iterations regularly to improve agility. The continuous integration and continuous delivery (CI/CD) approach supports both: it keeps processes fast and provides quick feedback, which helps teams improve existing features and adapt smoothly when a project plan pivots or changes.

You do not have to release the “perfect product” with a truckload of features all at once. Doing so can cause problems for your development team and users, such as:

- Users will not get updates quickly and may have to wait for batch releases.

- Users can’t test and give feedback on new features due to the prolonged launch.

- Shipping many features at once increases the release’s scope, making it harder for developers to fix bugs or issues after launch.
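The cost of waiting for batch releases is easy to quantify. The sketch below computes how many days, on average, a finished feature sits unreleased when releases happen on a fixed schedule. The completion days and release intervals are made-up numbers for illustration, and the model assumes that a feature finished on a release day ships that same day.

```python
import math


def average_wait_days(done_days: list[int], release_interval: int) -> float:
    """Average days a finished feature waits before users receive it,
    assuming releases go out on every multiple of release_interval."""
    if not done_days:
        return 0.0
    waits = []
    for day in done_days:
        # Next scheduled release on or after the day the feature was finished.
        next_release = math.ceil(day / release_interval) * release_interval
        waits.append(next_release - day)
    return sum(waits) / len(waits)


if __name__ == "__main__":
    finished = [2, 5, 9, 14]  # hypothetical days on which four features were done
    for interval in (30, 7, 1):  # monthly batch, weekly, and daily releases
        print(interval, average_wait_days(finished, interval))
```

In this example, a monthly batch leaves each feature waiting 22.5 days on average, weekly releases cut that to 3 days, and daily releases remove the wait entirely.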

Pay Attention to User Experience (UX)

User experience (UX) is essential in any DevOps project, especially since UX feedback informs iteration plans. Non-technical members of the team can act as a fresh pair of eyes and surface genuine UX needs.

You can use surveys, ticketing systems, UX discussion forums, or interdepartmental meetings to gather the feedback you need to refine the user design.

Incorporate Change Management into All DevOps Projects

Integrate change management best practices into new development and release cycles so teams use the tools properly and efficiently, especially for security and regulatory compliance needs.

A solid change management strategy helps ensure successful DevOps releases. It can include a Q&A forum or ticketing system for new users, documentation and extra training, and a dedicated DevOps task force to assess tool integration.

End Note

Your DevOps implementation will continue to evolve as your enterprise grows. To keep getting maximum value for your business, you must continuously experiment with new processes, skills, and tools to find those that integrate best across your whole DevOps toolchain.

That level of flexibility can be a challenge for any enterprise that is scaling development teams while juggling other business tasks. That’s where Symphony Solutions comes in!

We provide top-notch DevOps services to enterprises worldwide. So contact us today and let our professional DevOps teams build, test, and launch reliable products in record time.