In iGaming, platform choice is one of those decisions that feels harmless at the beginning, until it starts showing up in your P&L. You don’t notice it on day one. You notice it when margins get tight, when vendors push price changes, when product changes take longer than they should, or when scaling into new markets turns into a negotiation instead of a decision.

What starts as an iGaming platform infrastructure decision often turns into a structural advantage or a long-term constraint. Platform economics influence how much revenue you actually keep, how quickly you can adapt to market shifts, how exposed you are to vendor pricing and roadmaps, and how costly it becomes to scale across brands, regions, or verticals.

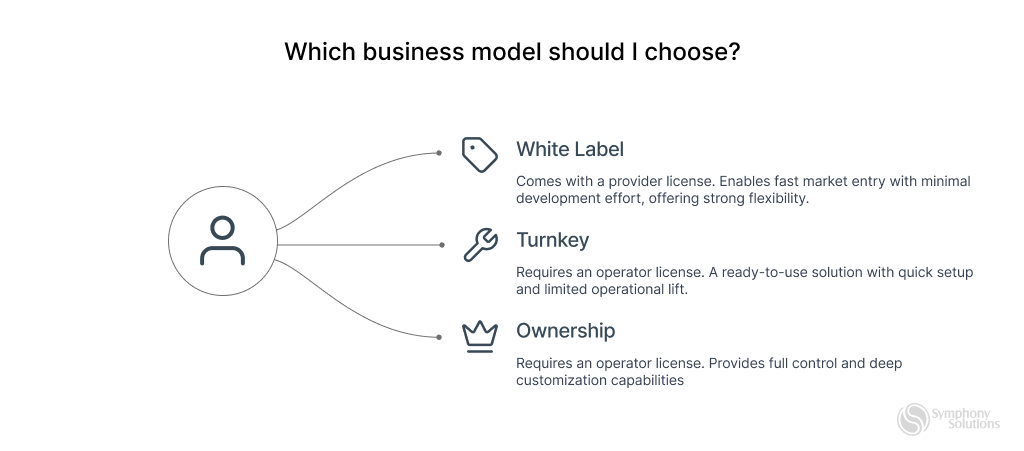

- White label typically optimizes for speed and simplicity, but can limit margin and strategic control over time.

- Turnkey offers more structure and customization, while still tying growth to vendor priorities.

- Ownership, or a no-rev-share sportsbook and casino, demands more upfront investment, yet unlocks deeper control, flexibility, and long-term profit potential.

This guide is built for operators at any stage. Whether you are actively running a sportsbook or casino, evaluating a platform migration, renegotiating commercial terms, planning multi-brand expansion, or simply pressure-testing your current setup, this is a practical deep dive into the real economics behind iGaming platform models.

Let’s break it down.

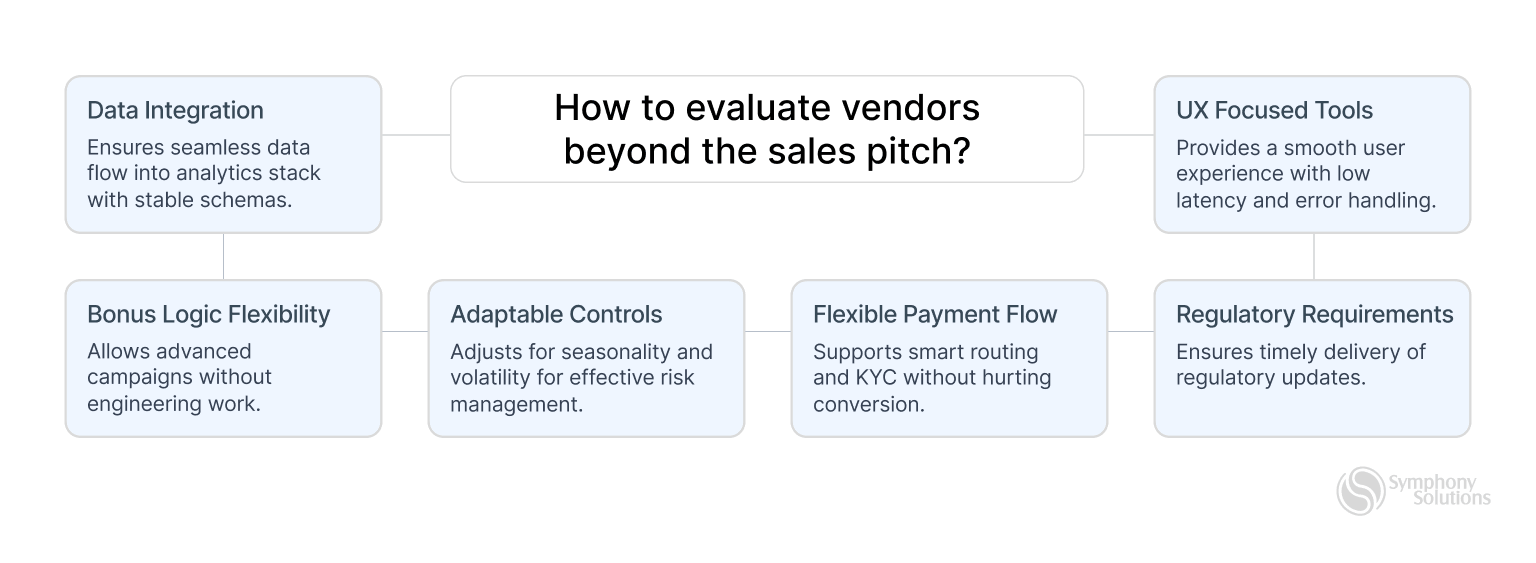

How to Evaluate Vendors Beyond the Sales Pitch

Sales decks highlight features. Experienced operators look at execution, flexibility, and operational depth. Key questions worth asking include:

- Does event data flow cleanly into your analytics stack with stable, well-documented schemas?

- Will the platform integrate seamlessly with required tools, sports feeds, game providers, and other critical systems?

- How flexible is the bonus logic? Can you run advanced campaigns without engineering work?

- How adaptable are trading and risk controls to seasonality and volatility?

- Is the payment flow flexible and configurable to your specific operational and regulatory needs?

- Does the vendor have a proven track record of shipping regulatory updates on time?

- Are the UX fundamentals strong: latency, error handling, mobile flow, and friction control?

In practice, small operational details often have a bigger impact on revenue and margin than headline features.
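One way to pressure-test the first question on that list is to check sample event payloads from the vendor against the schema they document. The sketch below is a minimal illustration of that idea. The `BetPlacedEvent` shape, its field names, and the `schema_problems` helper are all hypothetical and do not reflect any specific vendor's format.

```python
from dataclasses import dataclass, fields
from typing import Any, Dict, List


@dataclass(frozen=True)
class BetPlacedEvent:
    """Hypothetical documented event shape; real vendor schemas will differ."""
    schema_version: str
    event_id: str
    player_id: str
    stake_minor_units: int  # amount in minor currency units, e.g. cents
    currency: str
    placed_at: str          # ISO 8601 timestamp


def schema_problems(payload: Dict[str, Any]) -> List[str]:
    """List missing, mistyped, or undocumented fields in a raw payload."""
    expected = {f.name: f.type for f in fields(BetPlacedEvent)}
    problems = []
    for name, expected_type in expected.items():
        if name not in payload:
            problems.append(f"missing field: {name}")
        elif not isinstance(payload[name], expected_type):
            problems.append(f"wrong type for {name}")
    for name in payload:
        if name not in expected:
            problems.append(f"undocumented field: {name}")
    return problems


# Illustrative payload with three deliberate defects.
sample = {
    "schema_version": "1.0",
    "event_id": "evt-001",
    "player_id": "p-42",
    "stake_minor_units": "500",  # string instead of int
    "currency": "EUR",
    "bonus_flag": True,          # not in the documented schema
}
print(schema_problems(sample))
```

If a vendor's sample data regularly produces a long list here, that is usually a sign the analytics integration will need ongoing maintenance rather than a one-off setup.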

White Label vs Turnkey vs Ownership: How Each Model Actually Works

Ownership / No-Rev-Share Sportsbook and Casino

What No-Rev-Share Sportsbook and Casino Actually Means

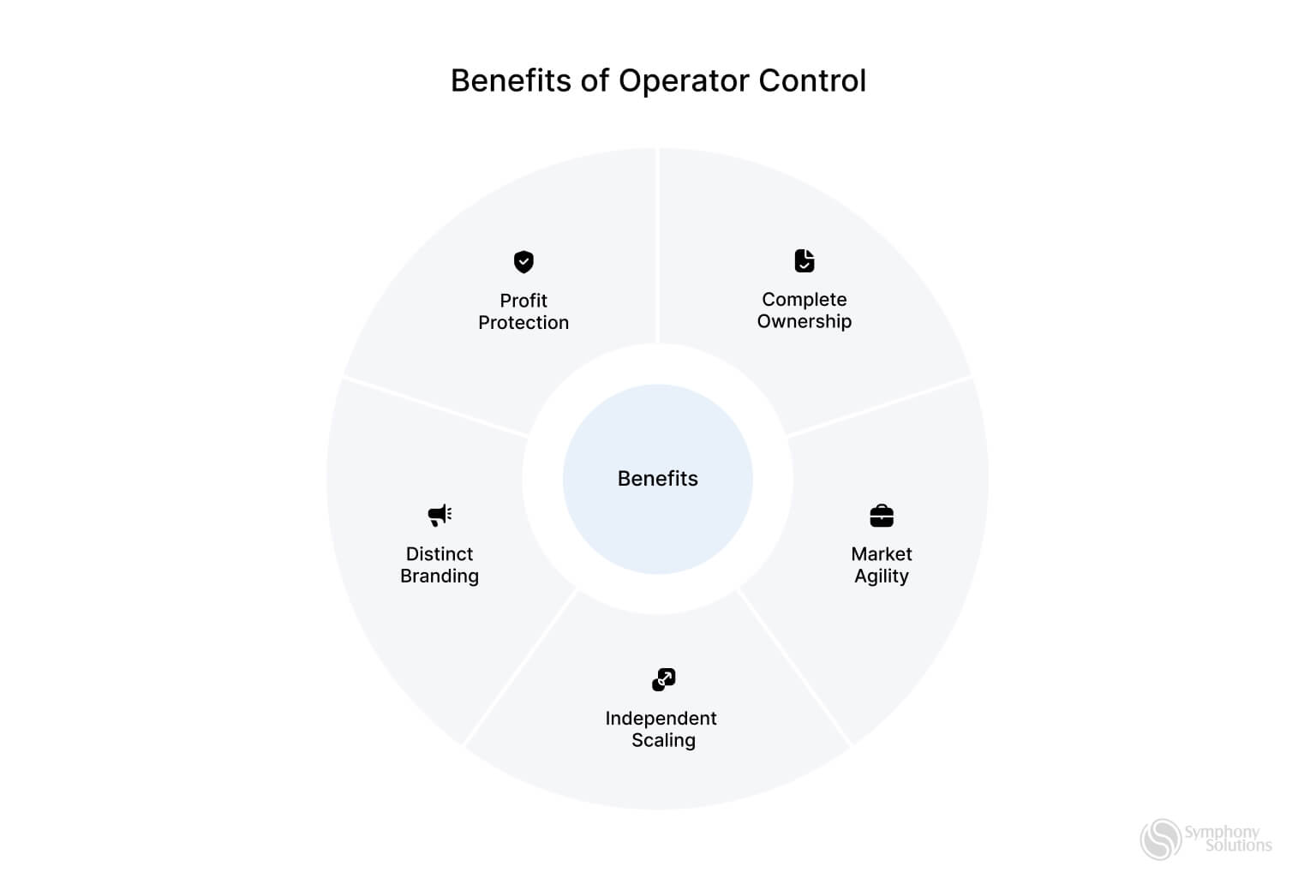

Under a no-rev-share sportsbook and casino model, the operator fully owns and controls the platform. This typically includes access to source code (or escrow), infrastructure, deployments, product roadmap, integrations, pricing logic, and player data. This removes reliance on vendor-imposed limits or embedded revenue share.

Compared to white label and turnkey, ownership shifts more responsibility in-house, but unlocks greater margin control, deeper product flexibility, and long-term strategic independence. It empowers operators to build true market differentiation, stand out clearly from competitors, and shape a product experience that reflects their unique brand. The platform evolves from a rented tool into a core strategic asset that drives sustainable competitive advantage.

Why Operators Choose Ownership

Operators usually explore ownership when platform fees start meaningfully impacting profitability or when vendor constraints slow innovation or differentiation.

Common drivers include:

- Reducing long-term revenue share and improving GGR retention

- Gaining control over sportsbook logic, risk, CRM, loyalty, and promotions

- Building a more distinctive product and brand experience

- Increasing leverage with suppliers and payment providers

- Owning player data to improve retention, segmentation, and LTV (Lifetime Value)

- Strengthening positioning for fundraising, M&A (Mergers and Acquisitions), or exit opportunities

When paired with a clear roadmap, ownership becomes a lever for margin optimization, faster iteration, and long-term control.
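The margin argument can be made concrete with a rough break-even calculation. The sketch below finds the first month in which the cumulative cost of ownership falls below the cumulative fees paid under a revenue-share deal. Every figure in it (monthly GGR, the revenue-share rate, the upfront ownership cost, and the monthly in-house running cost) is a hypothetical input chosen for illustration, not vendor pricing.

```python
from typing import Optional


def break_even_month(
    monthly_ggr: float,
    rev_share_rate: float,
    ownership_upfront: float,
    ownership_monthly: float,
    horizon_months: int = 60,
) -> Optional[int]:
    """Return the first month where cumulative ownership cost is lower than
    cumulative revenue-share fees, or None if that never happens in the horizon."""
    rev_share_total = 0.0
    ownership_total = ownership_upfront
    for month in range(1, horizon_months + 1):
        rev_share_total += monthly_ggr * rev_share_rate
        ownership_total += ownership_monthly
        if ownership_total < rev_share_total:
            return month
    return None


# Hypothetical inputs, for illustration only.
month = break_even_month(
    monthly_ggr=1_000_000,
    rev_share_rate=0.15,
    ownership_upfront=1_250_000,
    ownership_monthly=50_000,
)
print(month)  # 13
```

With the same cost assumptions but a monthly GGR of 100,000, the function returns `None`: the revenue share never exceeds the in-house running cost, which is the arithmetic behind ownership suiting operators that already have scale.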

Ownership in Practice: Benefits vs Trade-Offs

| Benefit | Enables | Requires |
| --- | --- | --- |
| Better margin retention | Lower or no platform revenue share | Managing and operating the platform in-house |
| Full roadmap control | Shorter time to market and faster adaptation to market needs | In-house product expertise supported by clear, disciplined prioritization |
| Deeper customization | Proprietary trading, CRM, and loyalty systems tailored to your specific business needs | Ongoing dev & QA capacity |
| Lower vendor dependency | Greater commercial leverage | Internal accountability |
| Stronger valuation narrative | Higher investor confidence | Governance and cost transparency |

Who Ownership Is Best Suited For

Ownership tends to work best for operators with scale, internal capability, and a long-term product vision, where deeper control translates into real business value.

| Operator Profile | Fit | Why |
| --- | --- | --- |
| Established operators with revenue | Strong | Platform costs impact EBITDA |
| Product-led brands investing in differentiation | Strong | Enables proprietary UX and logic |
| Multi-brand or multi-market groups | Strong | Greater flexibility at scale |
| Operators planning fundraising or M&A | Strong | Platform control improves valuation |
| Teams with mature product & tech capacity | Strong | Better equipped to manage complexity |
| Early-stage teams focused on speed | Weaker | Higher cost and longer timelines |
| Teams with limited technical leadership | Weaker | Higher execution risk, although this depends on the development partner; an experienced domain team such as Symphony Solutions can reduce it |
| Operators wanting fully managed platforms | Poor | Ownership requires hands-on control |

Top 3 Ownership / Source-Code Model Providers

BetSymphony

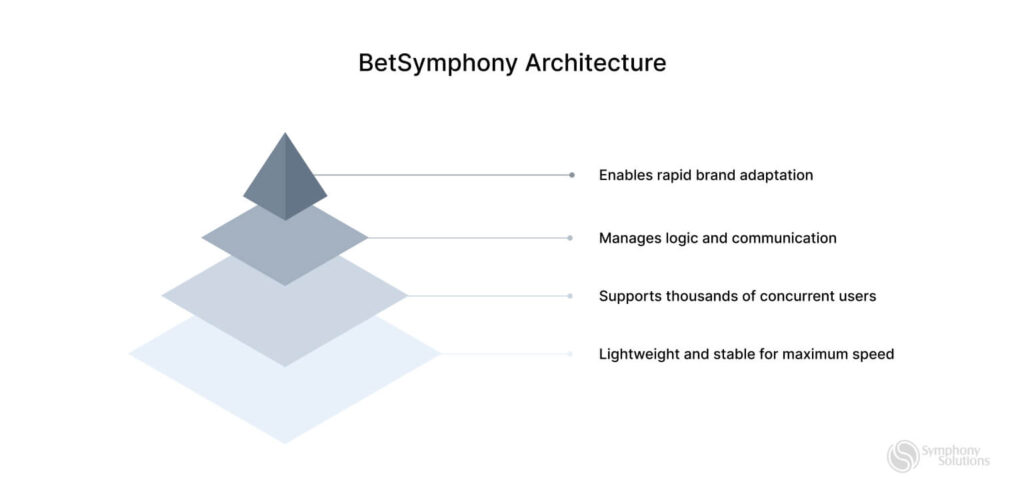

BetSymphony is an ownership-focused iGaming platform with sportsbook and casino developed by Symphony Solutions, designed for operators who want full control over their technology, product roadmap, and margins.

The platform is built around source-code ownership, zero revenue share, and deep customization, enabling teams to shape frontend experience, trading logic, integrations, and monetization without vendor constraints.

While ownership models typically require strong in-house technical expertise, BetSymphony is backed by Symphony Solutions as a reliable technology partner. This ensures operators gain full control without carrying the entire technical burden alone.

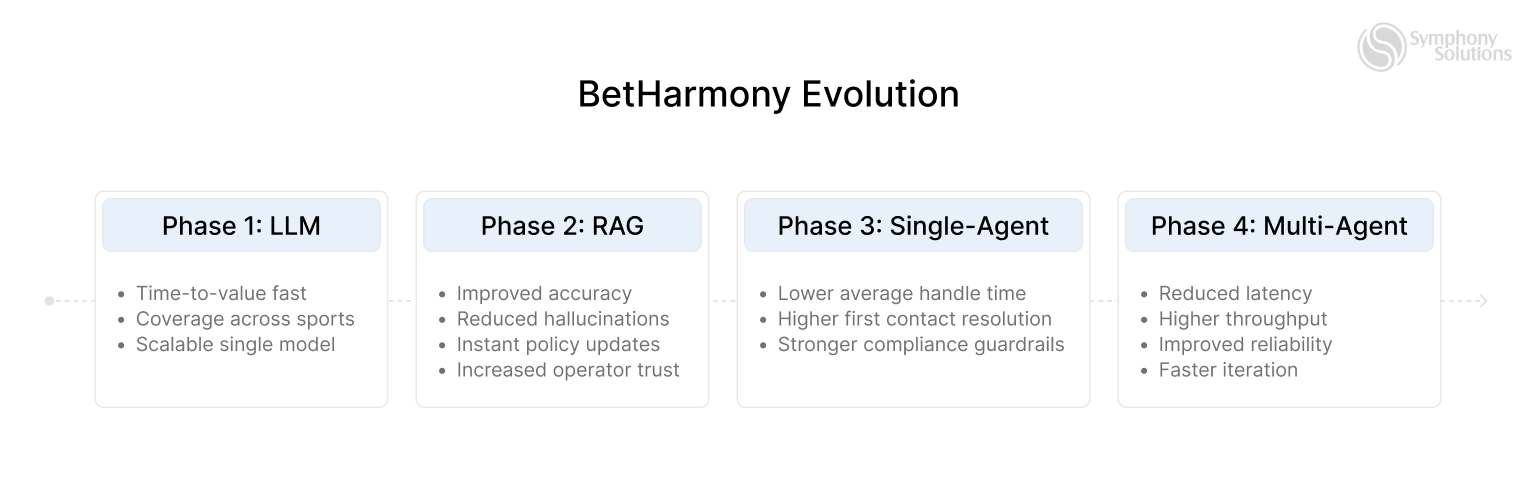

- Key Features: Full source-code ownership of an iGaming platform with sportsbook and casino, pre-match and live betting, customizable frontend and backend, multi-brand and multi-market support, a bonus engine, and AI-powered engagement via BetHarmony.

- Licensing: Built to support regulated-market operations, with configurable compliance controls such as responsible gaming, limits, and jurisdiction-specific rules; licensing depends on operator and region.

- Strengths: No revenue-share model, full roadmap autonomy, deep customization flexibility, modern scalable architecture, strong positioning for margin optimization and long-term platform independence.

- Weaknesses: Requires stronger internal product and technical capability than turnkey or white label; higher upfront commitment compared to vendor-managed models.

Note: Product development and technical execution are covered by Symphony Solutions, while licensing and operational management are handled by the operator.

- Ideal For: Operators seeking long-term ownership, margin control, and freedom to build a differentiated iGaming product without vendor lock-in.

- Pricing: License-based commercial model with custom pricing depending on platform scope, integrations, and ownership structure.

IQ Soft

IQ Soft is an Armenia-based iGaming technology provider offering casino, sportsbook, and multi-channel platform solutions with a strong focus on operator independence. The company positions itself around flexible business models, including turnkey, revenue share, and source-code ownership options, while supporting online, retail, and hybrid betting operations across multiple regions.

- Key Features: Core iGaming platform, sportsbook solution with live and pre-match betting, casino engine and game aggregation (30,000+ games), agent and affiliate system, bonus and gamification tools, crypto and blockchain-enabled products.

- Licensing: Supports operations across multiple jurisdictions and assists operators with regulatory and licensing requirements depending on market and business model.

- Strengths: Strong focus on platform ownership and independence, broad product suite covering casino, sportsbook, and retail, extensive game and payment aggregation, flexible commercial models.

- Weaknesses: Brand visibility is lower compared to tier-one global providers; platform documentation and onboarding experience may vary by region and project scope.

- Ideal For: Operators seeking flexible platform ownership options, agent-based business models, or multi-channel betting solutions across online and retail environments.

- Pricing: Custom commercial terms based on chosen business model, platform modules, integrations, and licensing needs.

Quantum Gaming

Quantum Gaming is an iGaming platform provider specializing in sportsbook-focused solutions, with additional casino and player management capabilities. The company emphasizes risk management, trading flexibility, and scalable infrastructure designed to support both emerging and regulated markets, with a focus on performance, customization, and operational control.

- Key Features: Sportsbook platform with pre-match and live betting, trading and risk management tools, casino integration and game aggregation, player account management (PAM), CRM and bonus systems, multi-currency and multi-language support.

- Licensing: Supports operators across various jurisdictions and can assist with compliance and licensing requirements depending on market and regulatory framework.

- Strengths: Strong sportsbook and trading focus, flexible risk and odds management, customizable platform architecture, scalable infrastructure for growth across multiple regions.

- Weaknesses: Smaller market presence compared to tier-one global providers; casino and non-sports modules may be less extensive than sportsbook-centric competitors.

- Ideal For: Operators prioritizing sportsbook performance, risk control, and platform flexibility, especially in growth-stage or emerging markets.

- Pricing: Custom commercial terms based on platform scope, sportsbook depth, integrations, and regulatory requirements.

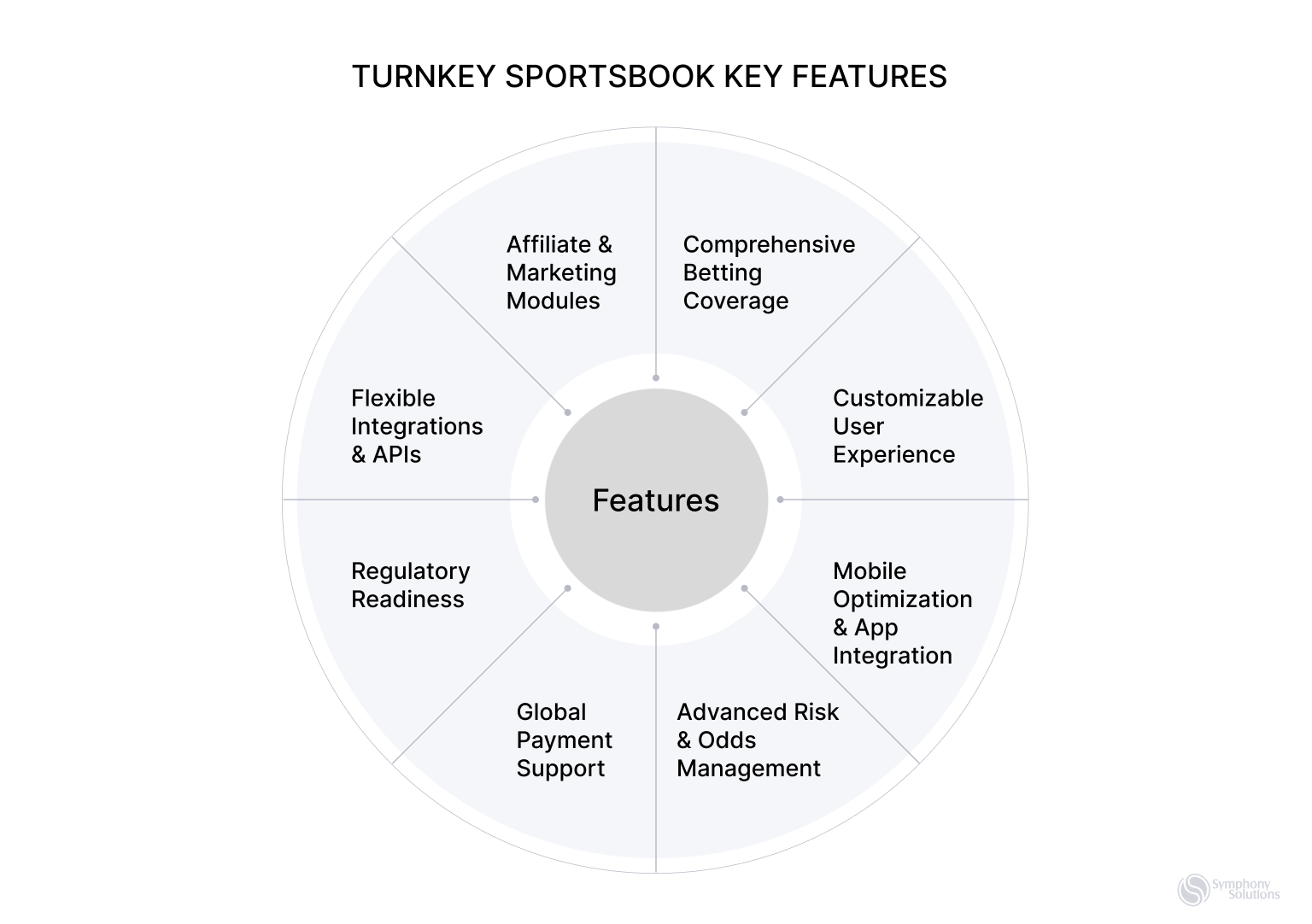

Turnkey Model

What Turnkey Actually Means

A turnkey model means the operator runs the business, while the vendor runs most of the technology. The platform usually comes with sportsbook, casino, payments, CRM, hosting, and compliance ready out of the box, letting teams focus on branding, marketing, and growth instead of infrastructure.

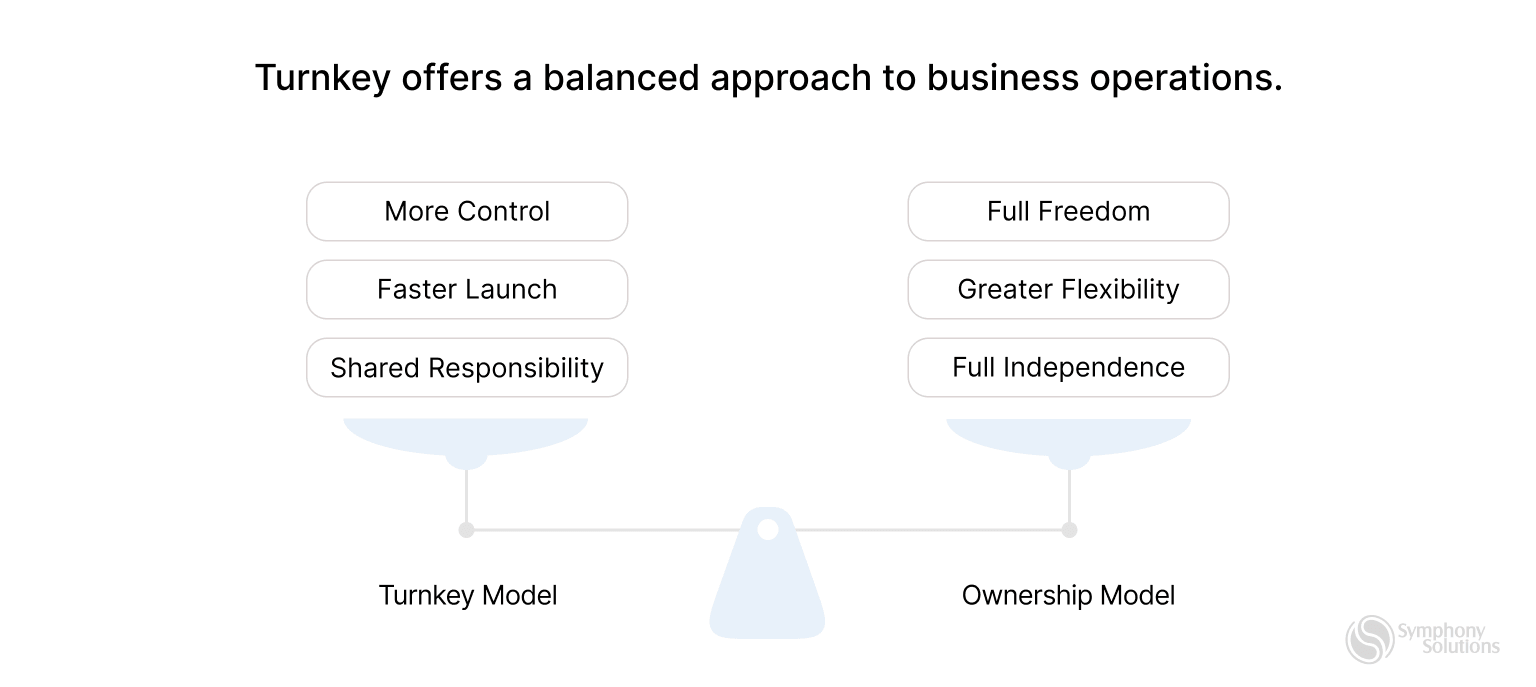

Turnkey offers more control than white label, but less freedom than ownership. In practice, it’s a middle ground that prioritizes faster launch, reasonable flexibility, and shared responsibility without full platform ownership.

Why Operators Choose Turnkey

Operators typically choose turnkey when they want a faster time-to-market than ownership allows, without giving up as much business control as white label requires.

Common motivations include:

- Launching faster without building a platform in-house

- Retaining control over bonuses, PSP routing, compliance, and promotions

- Expanding into multiple regulated markets with vendor-supported tooling

- Reducing technical overhead while keeping brand and commercial independence

- Accessing established sportsbook and casino ecosystems

- Scaling operations without fully internalizing engineering and DevOps

When aligned with the right growth stage, turnkey can offer a balanced mix of speed, structure, and control.

Turnkey in Practice: Benefits vs Trade-Offs

| Operator Benefit | What It Enables | What It Requires |
| --- | --- | --- |
| Faster launch | Shorter implementation timelines | Provider roadmap dependency |
| Moderate margin control | Less rev share than white label | Platform and supplier fees |
| Operational simplicity | Vendor-managed infrastructure | Limited deep customization |
| Multi-market readiness | Easier regulatory expansion | Reliance on vendor compliance updates |
| Lower technical burden | Smaller in-house tech team | Less control over platform internals |

Who Turnkey Is Best Suited For

Turnkey tends to work best for operators who want to scale efficiently without fully owning the platform stack, especially when speed and regulatory readiness matter.

Turnkey Fit by Operator Profile

| Operator Profile | Compatibility | Why |
| --- | --- | --- |
| Growth-stage operator expanding across markets | Strong | Faster rollout with vendor-supported compliance |
| Operator launching multiple brands | Strong | Shared infrastructure with lower overhead |
| Teams with limited engineering capacity | Strong | Provider manages platform complexity |
| Operator prioritizing speed and stability | Strong | Faster launch with predictable operations |
| Mature operator optimizing for full margin control | Weak | Platform fees may limit long-term margins |
| Product-led brand seeking deep customization | Weak | Provider roadmap can constrain innovation |
| Operator wanting full platform ownership | Poor | Turnkey remains vendor-dependent |

Top 3 Turnkey Platform Providers

Soft2Bet

Soft2Bet is a Malta-based iGaming platform provider offering turnkey and white label solutions for online casino and sportsbook operators. The company is known for its strong presence in regulated markets, its proprietary MEGA gamification engine, and a modular platform designed to support rapid multi-market expansion and localization at scale.

- Key Features: Turnkey casino and sportsbook platform, MEGA gamification engine for retention and engagement, large casino and sportsbook content coverage, multi-brand and multi-market support, advanced CRM and bonus tooling, broad localization and language capabilities.

- Licensing: Holds and supports operations across multiple regulated jurisdictions, including Malta, Sweden, Denmark, Greece, Romania, Italy, Ireland, Ontario (Canada), and others; assists partners with licensing depending on market.

- Strengths: Strong gamification and retention layer (MEGA), experience operating in regulated markets, scalable multi-brand infrastructure, solid sportsbook and casino coverage, growing global footprint.

- Weaknesses: Platform complexity may be higher for small or early-stage operators; some features and gamification layers may require additional integration effort depending on setup.

- Ideal For: Operators planning rapid multi-market expansion who value gamification, localization depth, and a platform built for regulated environments.

- Pricing: Custom commercial terms based on platform scope, licensing, content coverage, and operational requirements.

Uplatform

Uplatform is an iGaming platform provider focused on helping operators launch across multiple markets and scale quickly with both casino and sportsbook products. The platform emphasizes localization, content depth, and operational tooling designed to support expansion in regulated and emerging regions, with broad coverage across sports events, casino games, languages, and payment methods.

- Key Features: Turnkey sportsbook and casino platform, coverage of 1.5M+ pre-match and live sports events annually, 16,500+ casino games from 200+ providers, support for 65+ languages, 500+ payment methods, affiliate and agent scheme tooling for multi-market growth.

- Licensing: Supports operators entering regulated and emerging markets and may assist with licensing and compliance depending on jurisdiction.

- Strengths: Strong localization capabilities, extensive sportsbook and casino content coverage, large payment ecosystem, scalable multi-market infrastructure, useful affiliate and agent management tools.

- Weaknesses: Platform breadth and configuration options may feel complex for smaller teams; brand recognition is still developing compared to longer-established tier-one providers.

- Ideal For: Operators planning rapid multi-market expansion who need broad content coverage, strong localization, and scalable infrastructure for casino and sportsbook operations.

- Pricing: Custom commercial terms based on platform scope, integrations, content coverage, and regional requirements.

Gamingtec (GT Turnkey)

Gamingtec is an iGaming technology provider delivering a turnkey platform for launching and operating online casinos and sportsbooks. The company focuses on platform flexibility, broad game coverage, integrated payments, and back-office tools designed to streamline operations and support scalable growth.

- Key Features: Turnkey casino and sportsbook platform, large game aggregation library, integrated sportsbook module, CRM and bonus management tools, customizable frontend, multi-currency and multi-language support.

- Licensing: Commonly supports operations under Curaçao licensing and may assist with regulatory setup depending on jurisdiction.

- Strengths: Flexible platform configuration, balanced casino and sportsbook offering, strong user experience focus, relatively fast deployment timelines.

- Weaknesses: Brand recognition is still developing compared to long-established providers; regulatory depth in highly complex markets may vary.

- Ideal For: Operators seeking a modern, adaptable casino and sportsbook platform with a solid feature set and reasonable customization options.

- Pricing: Custom quotes based on platform scope, integrations, licensing requirements, and operational needs.

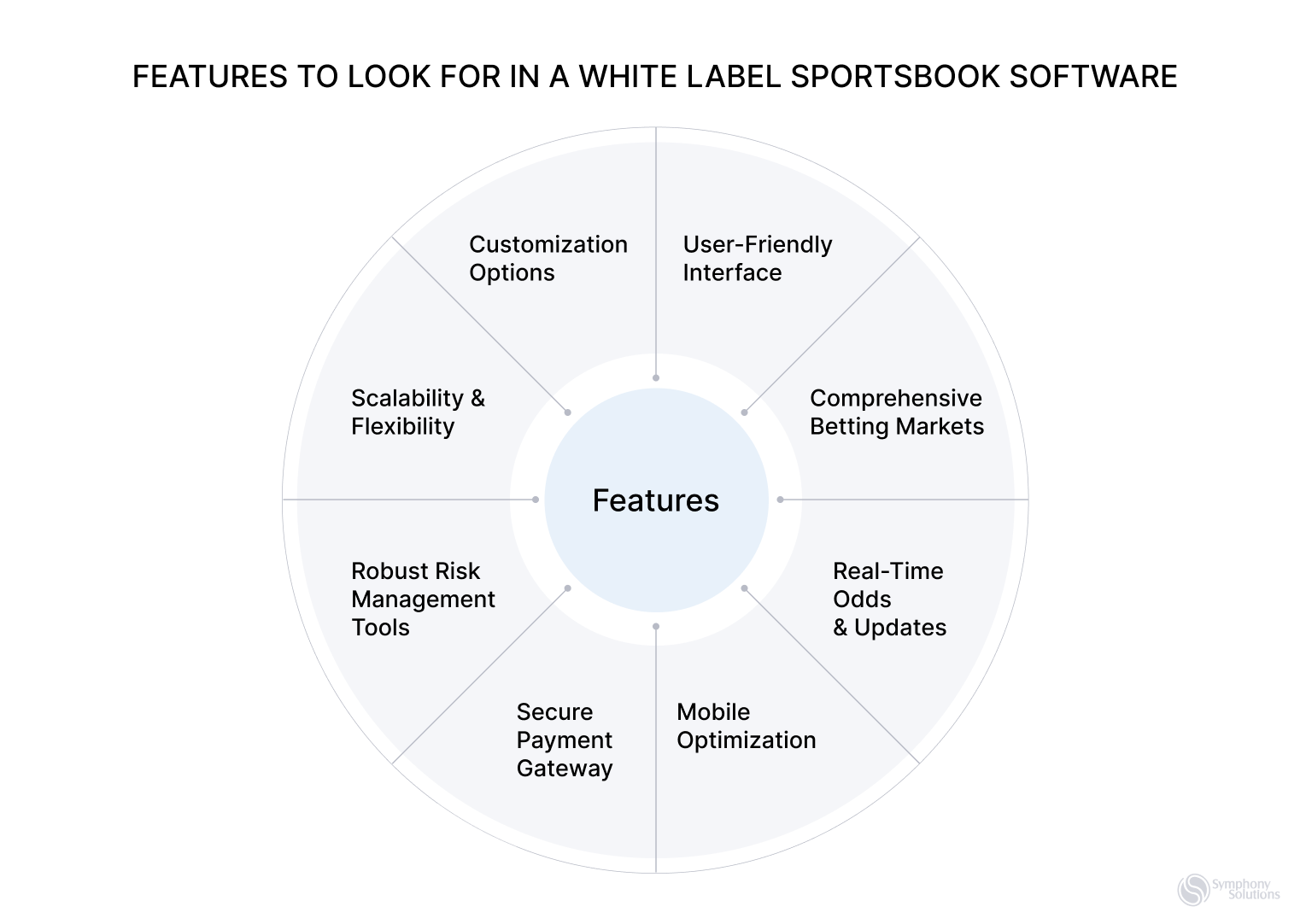

White Label Model

What White Label Actually Means

Under a white label model, the platform stays under the vendor's control. The provider handles technology, hosting, integrations, and often licensing and compliance, while the operator focuses on branding, marketing, player acquisition, and basic configuration. In a turnkey setup, by contrast, the operator keeps more decisions in-house, such as bonuses, PSP routing, compliance, and licensing strategy.

White label is the fastest and simplest way to go live, with low upfront effort. The trade-off is higher revenue share, limited customization, and strong vendor dependency, making it best for speed and simplicity rather than deep control or margin optimization.

Why Operators Choose White Label

Operators usually choose white label when they want to launch quickly, minimize operational overhead, or test market demand without committing to heavy upfront investment.

Common motivations include:

- Launching a casino or sportsbook as quickly as possible

- Avoiding the need to manage technology, hosting, and compliance

- Reducing upfront costs and internal technical requirements

- Testing new markets, brands, or acquisition channels

- Running media-led or affiliate-driven brands with minimal infrastructure

- Leveraging vendor-provided licensing or regulatory coverage in certain markets

When used strategically, white label can be an effective way to validate demand, enter new regions, or operate smaller satellite brands.

White Label in Practice: Benefits vs Trade-Offs

| Operator Benefit | What It Enables | What It Requires |
| --- | --- | --- |
| Fastest launch | Go live in weeks, not months | Limited product and UX control |
| Lowest upfront cost | Minimal initial investment | Higher long-term revenue share |
| Operational simplicity | Provider handles tech and compliance | Strong vendor dependency |
| Reduced regulatory burden | Easier market entry in some regions | Limited control over licensing setup |
| Easy market testing | Quick validation of new brands or GEOs | Migration can be complex later |

Who White Label Is Best Suited For

White label tends to work best for operators who prioritize speed, simplicity, and low upfront risk, especially in early-stage or experimental setups.

| Operator Profile | White Label Fit | Why |
| --- | --- | --- |
| Early-stage startup testing demand | Strong | Fast launch with minimal investment |
| Media, affiliate, or influencer brand | Strong | Monetize traffic without tech overhead |
| Operator launching a short-term or niche brand | Strong | Quick setup with limited commitment |
| Team with limited technical or operational capacity | Strong | Vendor handles platform complexity |
| Growth-stage operator optimizing margins | Weaker | Revenue share limits profitability |
| Product-led brand seeking differentiation | Weaker | Limited customization and roadmap control; competitors run largely the same product |
| Operator planning long-term scale or ownership | Poor | Vendor lock-in can constrain future moves |

Top 3 White Label Platform Providers

SoftSwiss

SoftSwiss is a well-established iGaming technology provider delivering a mature white label casino platform designed for scalability and performance. The company is recognized for its stable infrastructure, large-scale game aggregation featuring content from leading studios, a flexible bonus framework, and strong capabilities in cryptocurrency-based gaming. Its in-house game studio, BGaming, adds proprietary titles to its overall content portfolio.

- Key Features: Full-scale casino platform, extensive game content library, crypto-oriented payment support, advanced bonus and promotional engine, affiliate tracking system (Affilka), comprehensive back-office tools.

- Licensing: Solutions are commonly offered under Curaçao or MGA licenses (operators should confirm jurisdictional specifics).

- Strengths: Established market presence, broad game selection, crypto-native functionality, reliable platform performance, feature-rich ecosystem.

- Weaknesses: Entry costs may be higher for smaller or early-stage operators; high platform demand can occasionally affect onboarding timelines.

- Ideal For: Operators looking for a high-end, scalable white label casino solution with strong content depth and cryptocurrency support.

- Pricing: Tailored commercial terms depending on platform scope, licensing, and operational requirements.

EveryMatrix

EveryMatrix is a large B2B iGaming technology provider, with CasinoEngine serving as its flagship casino aggregation and management platform. The solution is widely recognized for its extensive game portfolio, modular architecture, and ability to support both white label deployments and integrations into existing operator stacks.

- Key Features: CasinoEngine game aggregator with thousands of titles, BonusEngine for advanced promotions, GamMatrix for player and gaming management, MoneyMatrix for payment processing, modular platform components, enterprise-grade infrastructure.

- Licensing: Supports operations across multiple regulated markets and can assist with licensing depending on jurisdiction.

- Strengths: Extremely large game library, advanced bonus and gamification capabilities, strong technical foundation, flexible modular design for scaling.

- Weaknesses: Enterprise-oriented structure can make setup more complex and costly; may be excessive for small or simple casino projects.

- Ideal For: Established operators or well-funded businesses seeking a highly scalable casino platform with deep content coverage and advanced tooling.

- Pricing: Enterprise-level pricing, typically based on platform scope and integration complexity; consultation required.

SoftGamings

SoftGamings is an established iGaming platform provider offering a full white label casino solution alongside sportsbook, game aggregation, and payment infrastructure. The company is known for its extensive content library, broad payment coverage, and flexible platform options that support both turnkey launches and API-based integrations.

- Key Features: Turnkey and API-based platform options, 10,000+ games from 200+ providers, loyalty and retention tools, bonus and promotional systems, crypto casino capabilities, multiple licensing pathways.

- Licensing: Can support operators with various licensing frameworks or provide solutions under its own licensing umbrella.

- Strengths: Extremely large game portfolio, wide range of payment integrations, flexible platform structure, strong emphasis on customization and scalability.

- Weaknesses: The breadth of features and configuration options may feel complex for newer operators without structured onboarding or guidance.

- Ideal For: Operators seeking a very large game catalog combined with deep platform customization and flexible deployment models.

- Pricing: Custom commercial proposals based on selected modules, services, and operational scope.

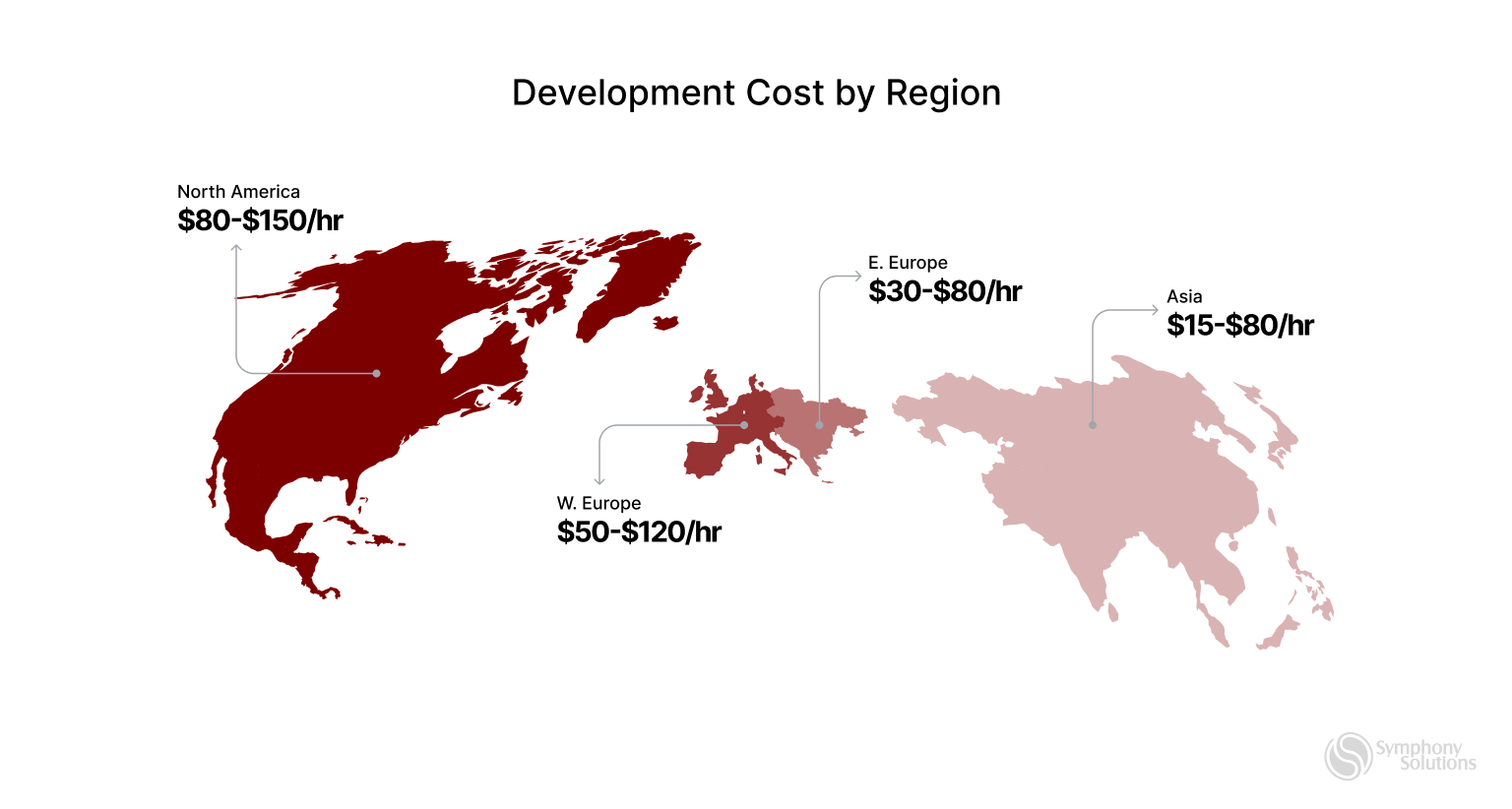

The Cost Conversation Operators Actually Need to Have

When operators ask about platform pricing, they often expect a simple number. In reality, costs are layered and structural.

White label tends to minimize upfront investment but embeds higher long-term revenue share. Turnkey typically combines setup fees, monthly platform costs, integrations, and supplier or sportsbook rev share. Ownership models often reduce ongoing revenue leakage but require higher upfront spend and more internal responsibility.

For operators thinking long term, the key metric is not launch cost but the marginal cost of each additional brand or market, which often determines whether scaling actually increases profitability.
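As a rough illustration of that metric, the sketch below estimates the first-year cost of adding one more brand under each model. The `PlatformModel` fields and every number in `MODELS` are hypothetical assumptions, not vendor pricing, and the ownership row deliberately excludes the original platform build, which is treated as already paid for.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PlatformModel:
    name: str
    setup_per_brand: int    # one-off cost to launch each extra brand
    monthly_per_brand: int  # recurring platform or operations cost per brand
    rev_share_pct: int      # share of GGR paid to the vendor, in percent


def marginal_annual_cost(model: PlatformModel, brand_monthly_ggr: int) -> int:
    """First-year cost of adding one more brand under a given model."""
    recurring = 12 * model.monthly_per_brand
    rev_share = 12 * brand_monthly_ggr * model.rev_share_pct // 100
    return model.setup_per_brand + recurring + rev_share


# Hypothetical figures, for illustration only.
MODELS = [
    PlatformModel("white label", setup_per_brand=20_000, monthly_per_brand=5_000, rev_share_pct=20),
    PlatformModel("turnkey", setup_per_brand=60_000, monthly_per_brand=15_000, rev_share_pct=8),
    PlatformModel("ownership", setup_per_brand=40_000, monthly_per_brand=10_000, rev_share_pct=0),
]

for ggr in (100_000, 500_000):
    for model in MODELS:
        cost = marginal_annual_cost(model, ggr)
        print(f"GGR {ggr:>7}/month  {model.name:<11}  {cost:>9}")
```

Under these assumptions the revenue-share term dominates as a brand grows: the white label figure rises from 320,000 to 1,280,000 when monthly GGR goes from 100,000 to 500,000, while the ownership figure stays at 160,000. Real contracts will differ, so the value of the exercise is in the structure of the comparison rather than the specific totals.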

TL;DR

| Model | Best For | Speed to Launch | Control | Vendor Lock-in | Scalability | Example Providers |
| --- | --- | --- | --- | --- | --- | --- |
| Ownership / Source-Code | Mature operators, margin optimization, differentiation | Slowest (3–6+ months) | Full | Low | Excellent | BetSymphony, IQ Soft, Quantum Gaming |
| Turnkey | Growth-stage operators, multi-market expansion | Fast (2–4 months) | Limited–Moderate | Medium | Good | Soft2Bet, Uplatform, Gamingtec |
| White Label | Fast launch, testing markets, low upfront risk | Fastest (weeks) | Very limited | High | Limited | SoftSwiss, SoftGamings, EveryMatrix (WL) |

The Takeaway

Choosing an iGaming platform is not a technical detail. It is a long-term business decision that shapes margin, speed, control, scalability, and enterprise value. The right model depends on an operator’s revenue stage, internal capabilities, risk tolerance, and strategic priorities, not on vendor promises or feature lists.

White label is best for fast validation and low-risk market entry, but rarely sustainable at scale. Turnkey works well for growth and multi-market expansion, but can introduce dependency and margin pressure over time. Ownership offers the highest level of control and long-term margin potential, but only works when the organization has the operational maturity to manage it.

The most successful operators treat platform economics as a profit lever, not an IT decision. They plan for migration before it becomes urgent, measure long-term margin impact instead of short-term cost, and align platform strategy with where they want the business to be in three to five years.

The best platform is not the one that launches fastest or looks best in a demo. It is the one that supports sustainable profitability, strategic flexibility, and long-term value creation.