Chatbots, a part of the digital landscape since ELIZA’s introduction in 1966, surged in popularity with the advent of OpenAI’s ChatGPT. The technology is reshaping business-customer interactions and is projected to become the primary customer service channel in about 25% of businesses by 2027, a shift that could reduce support costs by as much as 30%.

However, as organizations increasingly adopt chatbots to enhance customer interactions, the need for robust testing methods cannot be overemphasized. Chatbot testing is a pivotal step in the development lifecycle, ensuring seamless functionality and reliability of these automated conversational agents.

In this article, we’ll discuss how to test your AI chatbot and cover the essential tools and checklists for chatbot test automation.


What Is Chatbot Testing?

Chatbot testing is the process of systematically evaluating and verifying a chatbot’s functionality, performance, and reliability so that potential issues are identified and addressed before deployment. The goal is to ensure the chatbot can understand user input, provide accurate responses, and integrate seamlessly with other systems, delivering a smooth and user-friendly experience.

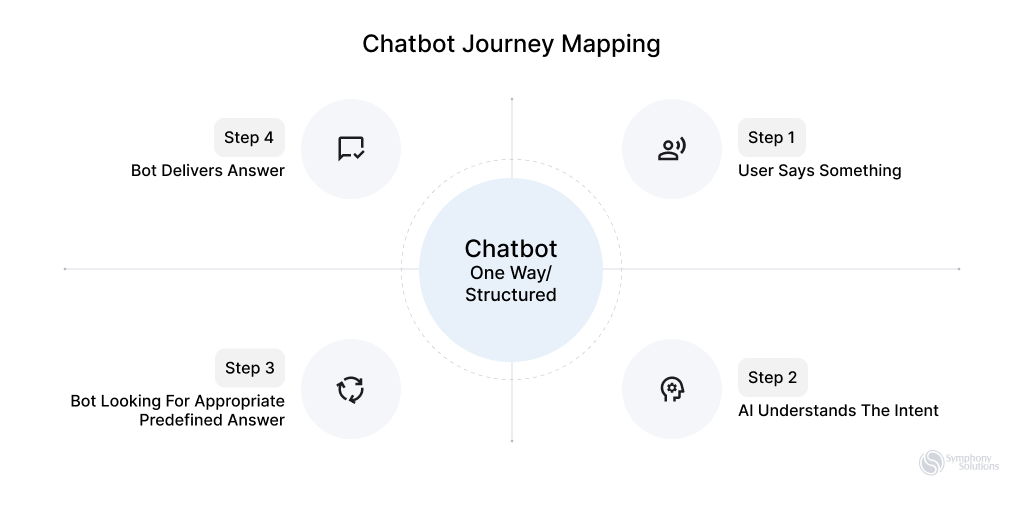

There are two primary types of chatbots: Rule-Based (Scripted) and AI-Powered (Machine Learning) chatbots, each requiring different testing approaches due to their unique technological underpinnings.

Rule-Based Chatbots: These operate on fixed rules and don’t learn from interactions. Testing verifies that the rules are correctly implemented and that the chatbot responds consistently to set scenarios.

AI-Powered Chatbots: These improve through user interactions. Testing assesses their learning algorithms and adaptability, verifying that they refine responses accurately and adapt to evolving user behaviors.
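For the rule-based case, a consistency check can be as simple as asserting that each scripted input always produces the same scripted reply. The sketch below is only an illustration: the rule table, the `rule_based_reply` function, and the replies are hypothetical stand-ins, not part of any particular chatbot platform.

```python
import re

# Hypothetical rule table: each rule maps a regex pattern to a fixed reply.
RULES = [
    (re.compile(r"\b(hi|hello|hey)\b", re.IGNORECASE),
     "Hello! How can I help you today?"),
    (re.compile(r"\bopening hours\b", re.IGNORECASE),
     "We are open 9am-5pm, Monday to Friday."),
]
FALLBACK = "Sorry, I didn't understand that."


def rule_based_reply(message: str) -> str:
    """Return the reply of the first matching rule, or the fallback."""
    for pattern, reply in RULES:
        if pattern.search(message):
            return reply
    return FALLBACK


def test_rules_are_deterministic() -> None:
    cases = {
        "Hello there": "Hello! How can I help you today?",
        "What are your opening hours?": "We are open 9am-5pm, Monday to Friday.",
        "Tell me a joke": FALLBACK,
    }
    for message, expected in cases.items():
        # The rule must be implemented as specified...
        assert rule_based_reply(message) == expected
        # ...and a scripted bot must answer the same way every time.
        assert rule_based_reply(message) == rule_based_reply(message)


test_rules_are_deterministic()
```

An AI-powered chatbot rarely returns the exact same string twice, so its tests usually assert on properties of the reply (detected intent, presence of key facts, tone) rather than on exact text.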

The Importance of Chatbot Testing

Thorough testing is essential for chatbots to provide reliable and secure experiences, which are crucial in modern digital interactions. This was evident with the launch of Microsoft’s Bing AI in February 2023, when, despite advanced technology, early adopters encountered issues like odd advice and inaccuracies. These challenges highlight why chatbot testing is important.

Additional reasons for chatbot testing include:

Accuracy and Relevance: Untested chatbots may struggle to understand user input, leading to irrelevant or incorrect responses. This not only affects communication but can also harm a brand’s reputation.

Brand Reputation: Providing inaccurate or offensive information can significantly damage a brand’s image, affecting customer trust and loyalty.

Security Risks: Without proper testing, chatbots may pose security threats. For instance, despite ChatGPT’s reliability, 71% of IT professionals are concerned about its potential misuse for hacking and phishing.

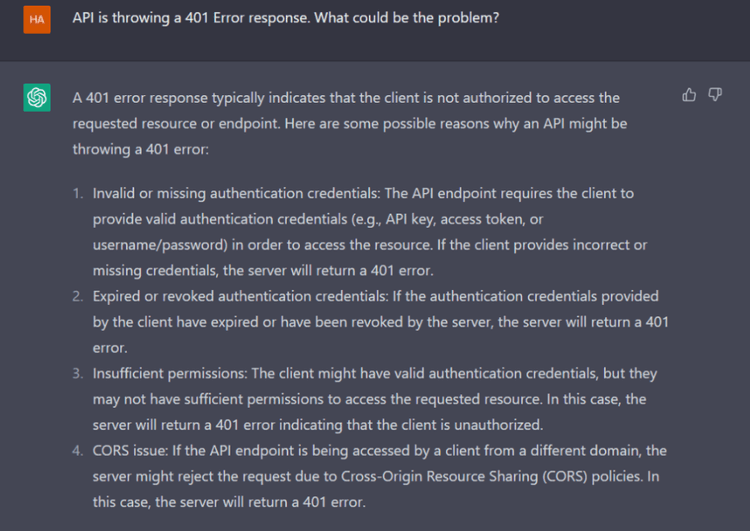

Integration and Compatibility: Untested chatbots can face challenges when integrated with other systems, hindering smooth operations and user experience.

Legal and Regulatory Compliance: Unchecked chatbots risk violating laws and regulations, exposing organizations to compliance issues and potential legal consequences.

Adaptability and Evolution: Proper testing is crucial for chatbots to adapt to evolving user needs and changing business environments, ensuring long-term effectiveness.

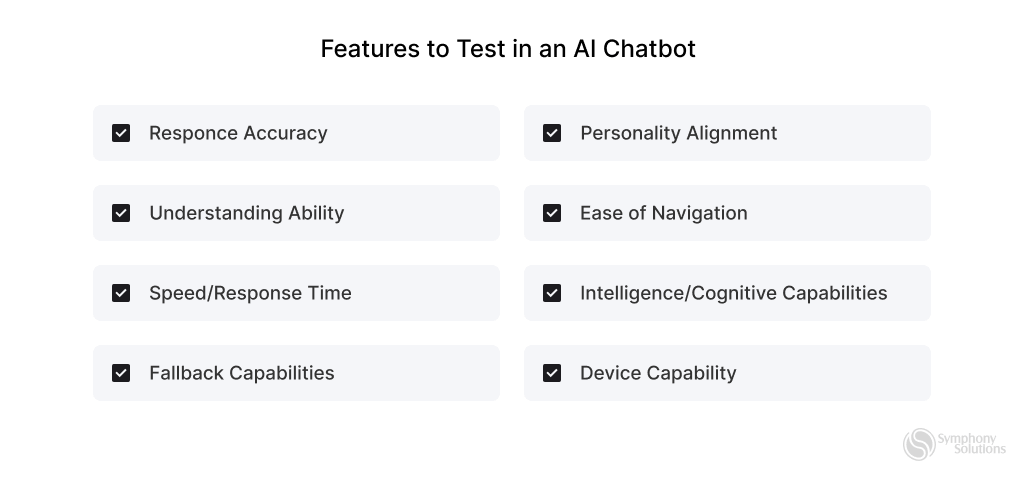

Features to Test in an AI Chatbot

Now that we know the risks associated with deploying untested chatbots, here are some key areas to focus on when testing.

Response Accuracy: Confirm the chatbot’s precision in correctly responding to user queries.

Understanding Ability: Evaluate how well the chatbot comprehends and interprets user input, ensuring accuracy in its responses.

Speed/Response Time: Measure the chatbot’s efficiency by assessing its response time to user interactions.

Fallback Capabilities: Test the chatbot’s ability to handle unexpected queries or errors, ensuring a seamless user experience.

Personality Alignment: Assess the chatbot’s tone and demeanor to ensure it aligns with the ongoing conversation or intended brand image.

Easy Navigation: Validate the chatbot’s user-friendliness and effectiveness in guiding users through conversations.

Intelligence: Evaluate the chatbot’s cognitive capabilities, including learning from interactions and adapting responses over time.

Device Compatibility: Ensure the chatbot operates seamlessly across various platforms and devices, maintaining a consistent user experience.

Explainability: The chatbot should be able to easily explain its decisions, making its processes transparent and building user trust.

Bias: It’s crucial to regularly test and correct the chatbot to avoid any biased responses, ensuring fairness for all users.

Scalability: As user numbers grow, the chatbot must remain fast and accurate, handling more interactions without a dip in performance.

Security: Verify that the chatbot’s security measures safeguard against cyber threats and keep all user data private and secure.
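Several of the features above, such as response accuracy, response time, and fallback behavior, can be checked together in one small helper. The following is a minimal sketch under stated assumptions: `get_reply` is a hypothetical stand-in for the chatbot under test, and the two-second limit is an arbitrary example threshold, not a recommended value.

```python
import time
from typing import Tuple

FALLBACK_REPLY = "Sorry, I didn't catch that. Could you rephrase?"


def get_reply(message: str) -> str:
    """Hypothetical stand-in for the chatbot under test."""
    known = {"track my order": "Please share your order number."}
    return known.get(message.strip().lower(), FALLBACK_REPLY)


def timed_reply(message: str) -> Tuple[str, float]:
    """Return the chatbot's reply and how long it took, in seconds."""
    start = time.perf_counter()
    reply = get_reply(message)
    return reply, time.perf_counter() - start


def check_feature(message: str, expected: str, max_seconds: float = 2.0) -> bool:
    """Pass only if the reply is correct AND arrives within the time limit."""
    reply, elapsed = timed_reply(message)
    return reply == expected and elapsed <= max_seconds


# Response accuracy and speed for a known query.
print(check_feature("Track my order", "Please share your order number."))
# Fallback capability for an unexpected query.
print(check_feature("asdf qwerty", FALLBACK_REPLY))
```

In a real project, `get_reply` would be replaced by a call to the chatbot’s API, and the threshold would come from the response-time requirement agreed for the product.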

Types of Chatbot Testing

Initiating tests at different stages of AI chatbot development is crucial for ensuring a robust and effective conversational agent. Here are the key stages for running tests:

Pre-launch Chatbot Testing

General Testing: Assess basic functionality, like salutations, welcome messages, and questions, to ensure accurate understanding and responses across diverse scenarios.

Domain Testing: Evaluate proficiency in specific areas, e.g., customer support improvement, ensuring the chatbot handles queries within its domain effectively.

Limit Testing: Push the chatbot’s limits to determine its threshold for handling high volumes or complex conversations, ensuring stability during peak times.

Post-launch Chatbot Testing

A/B Testing: Compare versions to identify the most effective and user-friendly options, gathering insights to enhance overall performance.

Conversational Factors: Focus on improving the chatbot’s ability to engage in natural and empathetic conversations, refining tone, language, and context.

Visual Factors: Ensure an intuitive, visually appealing interface for a user-friendly experience, enhancing the overall post-launch user interaction.

RPA Testing (Robotic Process Automation): RPA testing evaluates the chatbot’s automation capabilities, ensuring seamless execution of routine tasks. This enhances efficiency and reliability, contributing to error-free chatbot services.

User Acceptance Testing (UAT): UAT involves end-users testing the chatbot in real-world scenarios to ensure alignment with their needs. It provides insights into user satisfaction, identifies usability issues, and ensures user-friendly and effective service.

Security Testing: Security testing assesses the chatbot’s resistance to vulnerabilities, safeguarding user data and ensuring compliance. This enhances overall security, instilling user trust and ensuring a robust and reliable service.

Ad Hoc Testing: Ad hoc testing involves unplanned, exploratory testing to identify unforeseen issues. This broader evaluation contributes to a more resilient and reliable chatbot service.

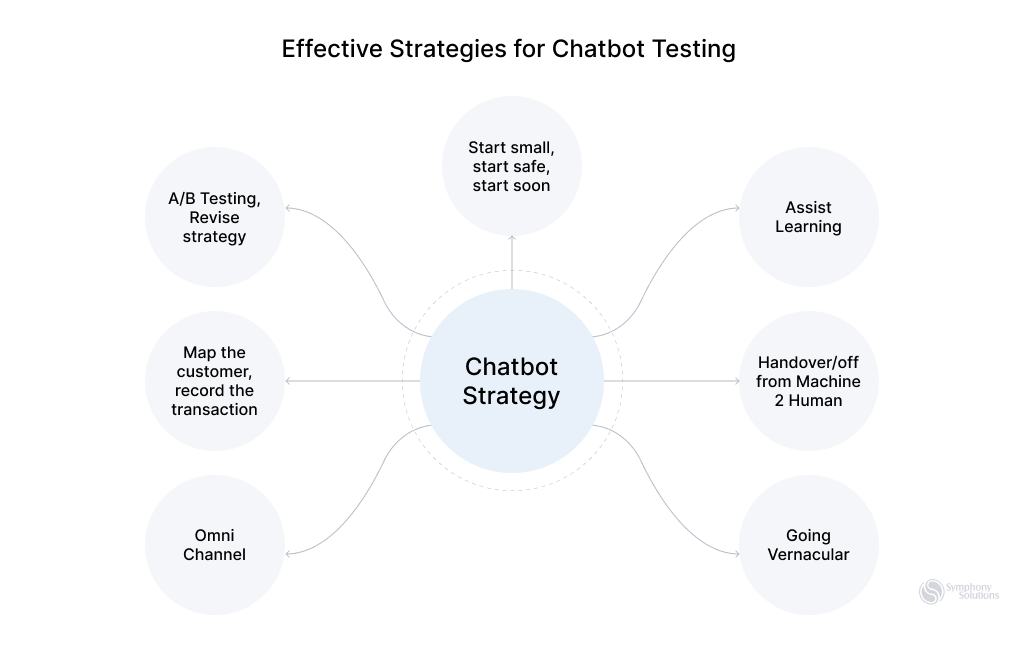

Effective Strategies for Chatbot Testing

Here are some effective testing strategies to ensure chatbots function properly, provide accurate responses, and deliver a positive user experience.

Step 1: Requirements Gathering

This process involves identifying and understanding the specific functionalities and user interactions the chatbot is expected to perform. It sets the foundation for creating a focused and relevant testing plan, ensuring that the chatbot meets its intended purposes and operates effectively in its designated environment.

Step 2: Comprehensive Planning

Begin with thorough planning that defines the scope of testing. Identify the key features to cover, such as responsiveness, speed, and accuracy, so the testing effort is focused where it matters most.

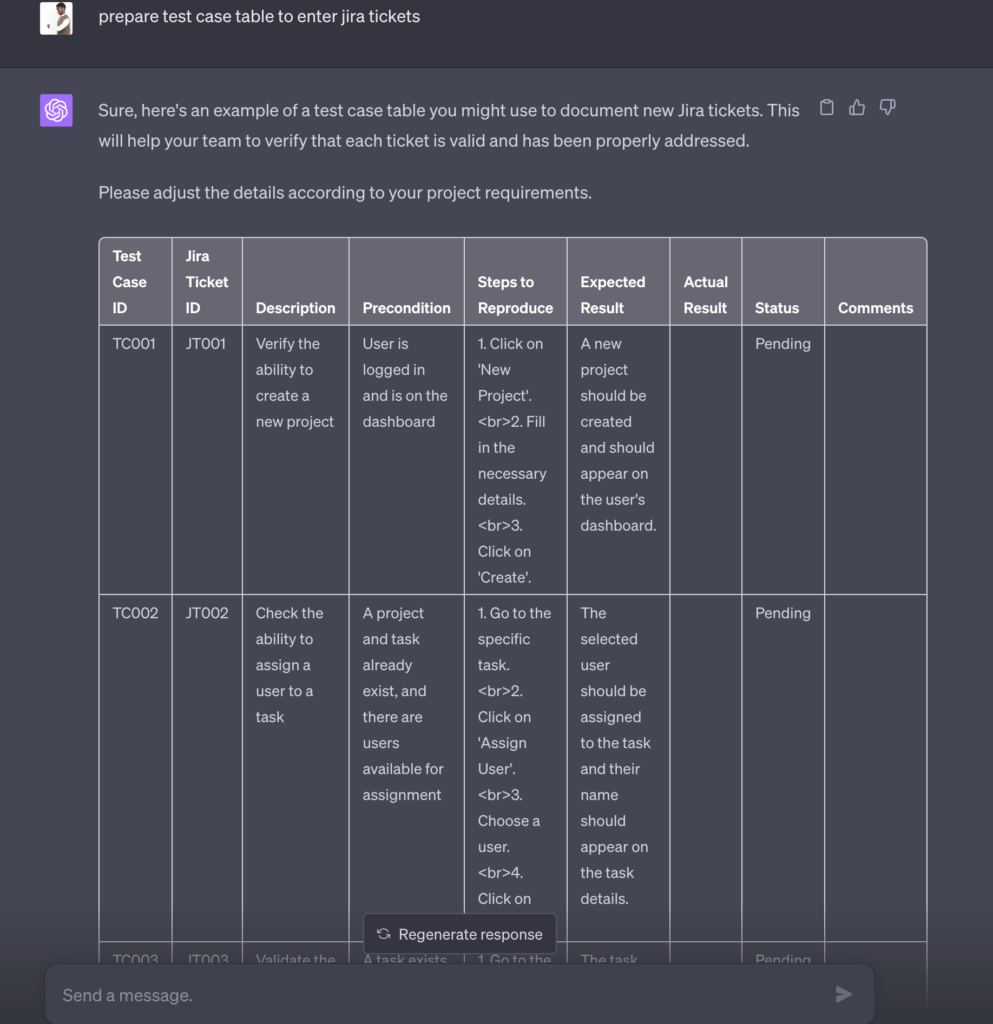

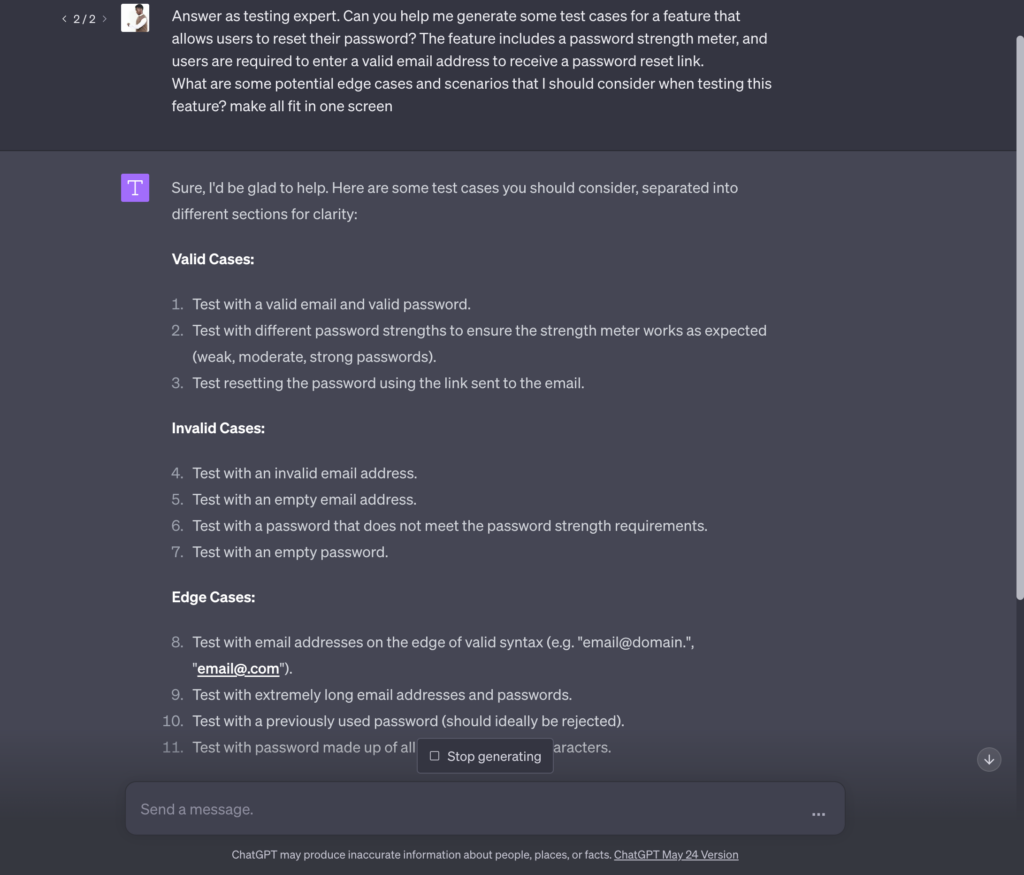

Step 3: Test Case Design

Develop effective test cases that cover a variety of scenarios, including variations in how users express the same intent and potential input errors. Comprehensive test cases expose weaknesses in chatbot functionality so they can be fixed.
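One common way to organize such cases is a table of utterances paired with the intent each should resolve to, including rephrasings, odd capitalization, and junk input. The sketch below assumes a hypothetical `toy_intent_classifier` standing in for the real chatbot; the intent names and utterances are illustrative only.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class IntentCase:
    utterance: str
    expected_intent: str


def toy_intent_classifier(utterance: str) -> str:
    """Hypothetical keyword classifier standing in for the real chatbot."""
    text = utterance.lower()
    if any(word in text for word in ("refund", "money back")):
        return "refund"
    if any(word in text for word in ("password", "log in", "login")):
        return "account_access"
    return "fallback"


CASES = [
    # Variations of the same user intent.
    IntentCase("I want a refund", "refund"),
    IntentCase("can i get my money back??", "refund"),
    IntentCase("I forgot my PASSWORD", "account_access"),
    IntentCase("can't log in", "account_access"),
    # Potential errors: empty and nonsensical input should hit the fallback.
    IntentCase("", "fallback"),
    IntentCase("!!!###", "fallback"),
]


def run_cases(classify: Callable[[str], str],
              cases: List[IntentCase]) -> List[IntentCase]:
    """Return the cases whose predicted intent differs from the expected one."""
    return [c for c in cases if classify(c.utterance) != c.expected_intent]


failures = run_cases(toy_intent_classifier, CASES)
print(f"{len(CASES) - len(failures)} passed, {len(failures)} failed")
```

Keeping the cases in data rather than in code makes it easy for non-developers to add new utterances as real user phrasing is discovered.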

Step 4: Integration with Real User Scenarios

Integrate the chatbot with real user scenarios to create a realistic testing environment. Putting the chatbot into live scenarios, such as an app or webpage, where users interact will uncover functional, non-functional, and integration issues, allowing focused resolution for a user-centric experience.

Step 5: Performance Testing

Conduct performance testing to ensure seamless chatbot performance. Assess responsiveness under different loads and stress conditions, gauging scalability and response times.
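A basic load check can be sketched with nothing more than the Python standard library: fire many requests concurrently and look at the latency distribution. Here `fake_chatbot_call` is a hypothetical placeholder that simulates latency with a short sleep; in practice it would be replaced by a real request to the chatbot, and dedicated load-testing tools would typically be used for larger volumes.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from statistics import quantiles


def fake_chatbot_call(message: str) -> str:
    """Hypothetical placeholder for a real request to the chatbot."""
    time.sleep(0.01)  # simulated processing latency
    return f"echo: {message}"


def measure_latency(message: str) -> float:
    """Time a single chatbot call, in seconds."""
    start = time.perf_counter()
    fake_chatbot_call(message)
    return time.perf_counter() - start


def load_test(concurrent_users: int, requests_per_user: int) -> dict:
    """Send requests from a pool of worker threads and summarize latency."""
    messages = [f"msg {i}" for i in range(concurrent_users * requests_per_user)]
    with ThreadPoolExecutor(max_workers=concurrent_users) as pool:
        latencies = list(pool.map(measure_latency, messages))
    # With n=20, the last cut point is the 95th percentile.
    p95 = quantiles(latencies, n=20, method="inclusive")[-1]
    return {
        "requests": len(latencies),
        "p95_seconds": p95,
        "max_seconds": max(latencies),
    }


print(load_test(concurrent_users=5, requests_per_user=4))
```

Running the same function with increasing `concurrent_users` values shows how response times change as load grows, which is the scalability signal this step is looking for.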

Step 6: Natural Language Processing (NLP) Evaluation

Evaluate the chatbot’s language comprehension abilities, especially in interpreting ambiguous or complex user queries. Verify multilingual capabilities for a smooth conversational experience worldwide.
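Language comprehension is often quantified by running a labeled set of utterances through the chatbot and comparing predicted intents with expected ones. The sketch below computes overall accuracy plus per-intent precision and recall; the intent labels and sample data are made up for illustration.

```python
from collections import Counter
from typing import Dict, List


def evaluate_intents(expected: List[str], predicted: List[str]) -> Dict:
    """Compute accuracy and per-intent precision/recall for intent labels."""
    if not expected or len(expected) != len(predicted):
        raise ValueError("expected and predicted must be non-empty and equal length")

    pairs = list(zip(expected, predicted))
    true_positives = Counter(e for e, p in pairs if e == p)
    predicted_counts = Counter(predicted)
    expected_counts = Counter(expected)

    per_intent = {}
    for intent in sorted(set(expected) | set(predicted)):
        tp = true_positives[intent]
        # Precision: of the times the bot chose this intent, how often was it right?
        precision = tp / predicted_counts[intent] if predicted_counts[intent] else 0.0
        # Recall: of the times this intent was expected, how often did the bot find it?
        recall = tp / expected_counts[intent] if expected_counts[intent] else 0.0
        per_intent[intent] = {"precision": precision, "recall": recall}

    accuracy = sum(true_positives.values()) / len(expected)
    return {"accuracy": accuracy, "per_intent": per_intent}


# Illustrative labels only; real data would come from annotated user queries.
expected = ["refund", "refund", "greeting", "greeting", "billing"]
predicted = ["refund", "billing", "greeting", "greeting", "billing"]
print(evaluate_intents(expected, predicted))
```

For multilingual checks, the same evaluation can be run once per language so that a weak language is not hidden by a strong overall average.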

Step 7: Continuous Testing and Feedback

Implement a continuous testing and feedback loop after deployment. Feedback from users and beta testers guides ongoing improvements and surfaces issues as the chatbot evolves.

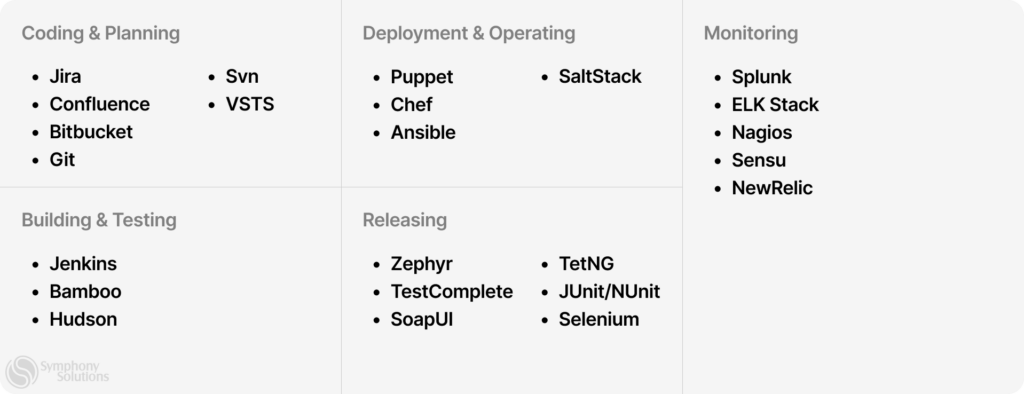

Utilizing Testing Tools

Testing tools are one of the best ways to verify that your chatbot is up to standard. These tools come with built-in functionality that lets you check the effectiveness of your chatbot under multiple scenarios. Some of these tools are low-code, allowing QA teams to test their chatbots without coding experience.

Features of testing tools

- Automated test script and case creation.

- Testing across multiple channels (web chat, messaging apps, voice).

- Support for testing across different browsers and devices.

- Scalability testing with simulated user loads.

- Detailed test reports and analytics generation.

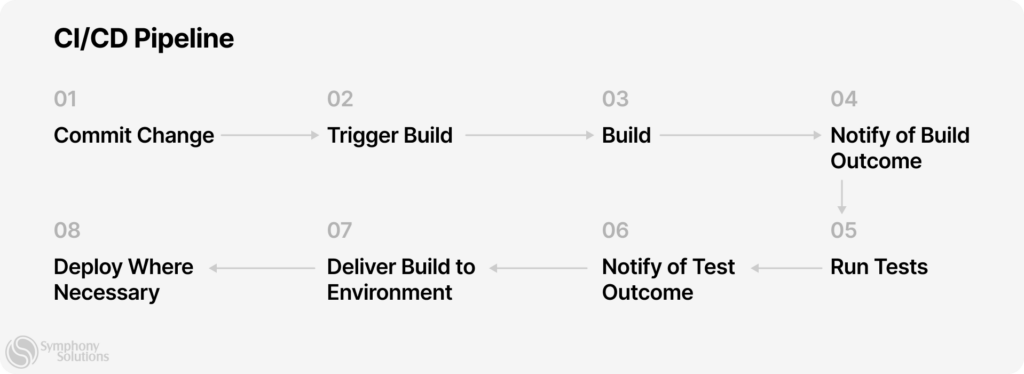

- Integration with CI/CD pipelines.

- Security testing measures.

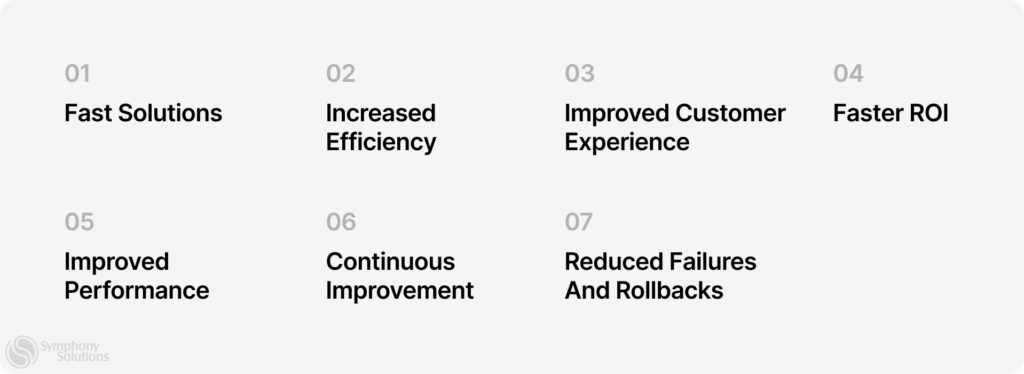

Benefits of using testing tools

- Saves time and effort.

- Identifies performance bottlenecks easily.

- Can cover a wide range of scenarios.

- Provides performance insights for continuous improvement.

- Reduces chances of human error.

- Supports validation under different conditions.

- Accelerates time to market.

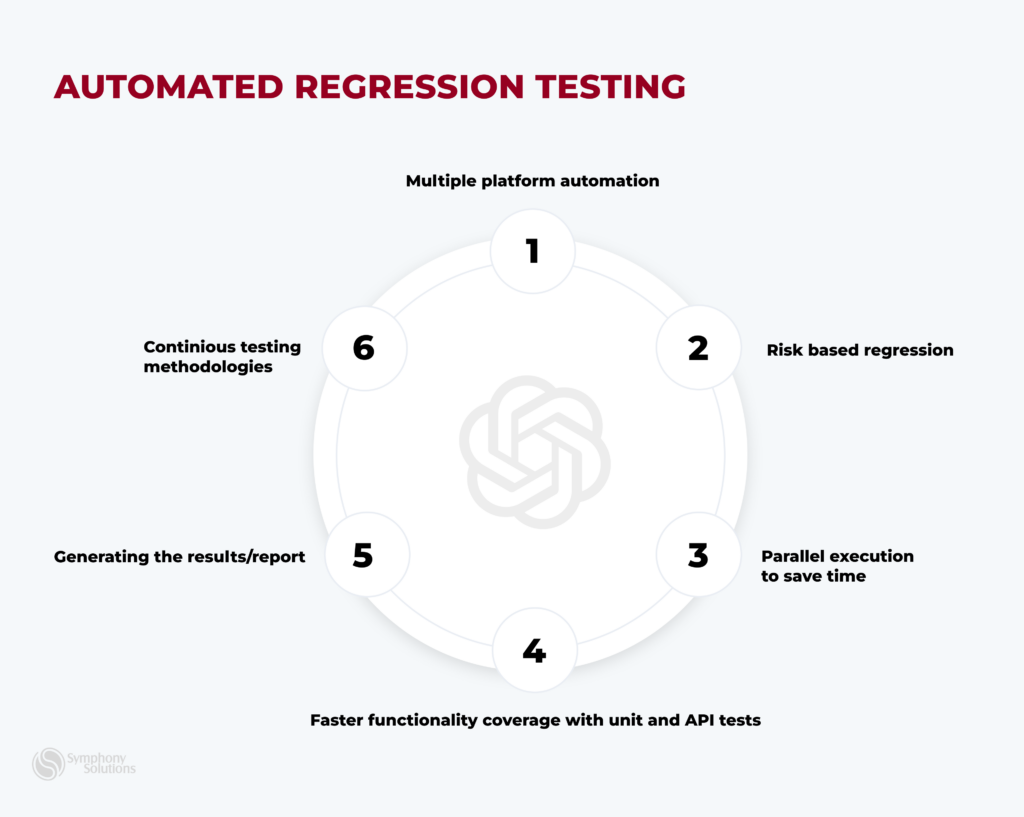

Running A/B Tests

Running A/B tests with multiple scenarios is another effective way to validate AI chatbots.

A/B testing allows developers to experiment with different versions of the chatbot to identify which performs better. This iterative testing process helps optimize the chatbot’s design, functionality, and overall user experience.

Here are some best practices for effective A/B testing:

- Clearly outline the goals of your A/B test.

- Test one variable at a time to accurately identify the impact of specific changes. This might include testing different greetings, response options, or conversation pathways.

- Ensure a fair comparison by randomly assigning users to different chatbot versions. This helps control for biases and external factors that could skew results.

- Gather a sufficiently large sample size to ensure statistical significance. A smaller sample may not provide reliable insights, leading to inaccurate conclusions.

- Track relevant metrics, such as activation rate, fallback rate, retention rate, self-service rate, confusion triggers, etc. This data will help assess the performance of each chatbot version against the defined objectives.

- Analyze the results, identify patterns, and iterate on the chatbot design accordingly.
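Two of the practices above, random assignment and statistical significance, lend themselves to a short sketch. The example below assigns users to a variant with a seeded random generator and compares a rate metric (for instance, self-service rate) between versions using a two-proportion z-test. The test choice, the function names, and the sample counts are assumptions for illustration; other statistical tests may suit your metrics better.

```python
import math
import random
from typing import Tuple


def assign_variant(user_id: str, seed: int = 42) -> str:
    """Pseudo-randomly assign a user to A or B, stable for the same user and seed."""
    rng = random.Random(f"{seed}:{user_id}")
    return "A" if rng.random() < 0.5 else "B"


def two_proportion_z_test(success_a: int, total_a: int,
                          success_b: int, total_b: int) -> Tuple[float, float]:
    """Return (z, two-sided p-value) for the difference between two rates."""
    if total_a <= 0 or total_b <= 0:
        raise ValueError("both groups need at least one user")
    rate_a = success_a / total_a
    rate_b = success_b / total_b
    pooled = (success_a + success_b) / (total_a + total_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / total_a + 1 / total_b))
    if std_err == 0:
        # Both groups are all-success or all-failure: no measurable difference.
        return 0.0, 1.0
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical counts: users who resolved their issue without a human agent.
z, p = two_proportion_z_test(success_a=120, total_a=1000,
                             success_b=150, total_b=1000)
print(f"variant for user-123: {assign_variant('user-123')}")
print(f"z = {z:.2f}, p = {p:.3f}")
```

A small p-value suggests the difference between versions is unlikely to be noise, but the threshold and the minimum sample size should be decided before the test starts, not after looking at the data.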

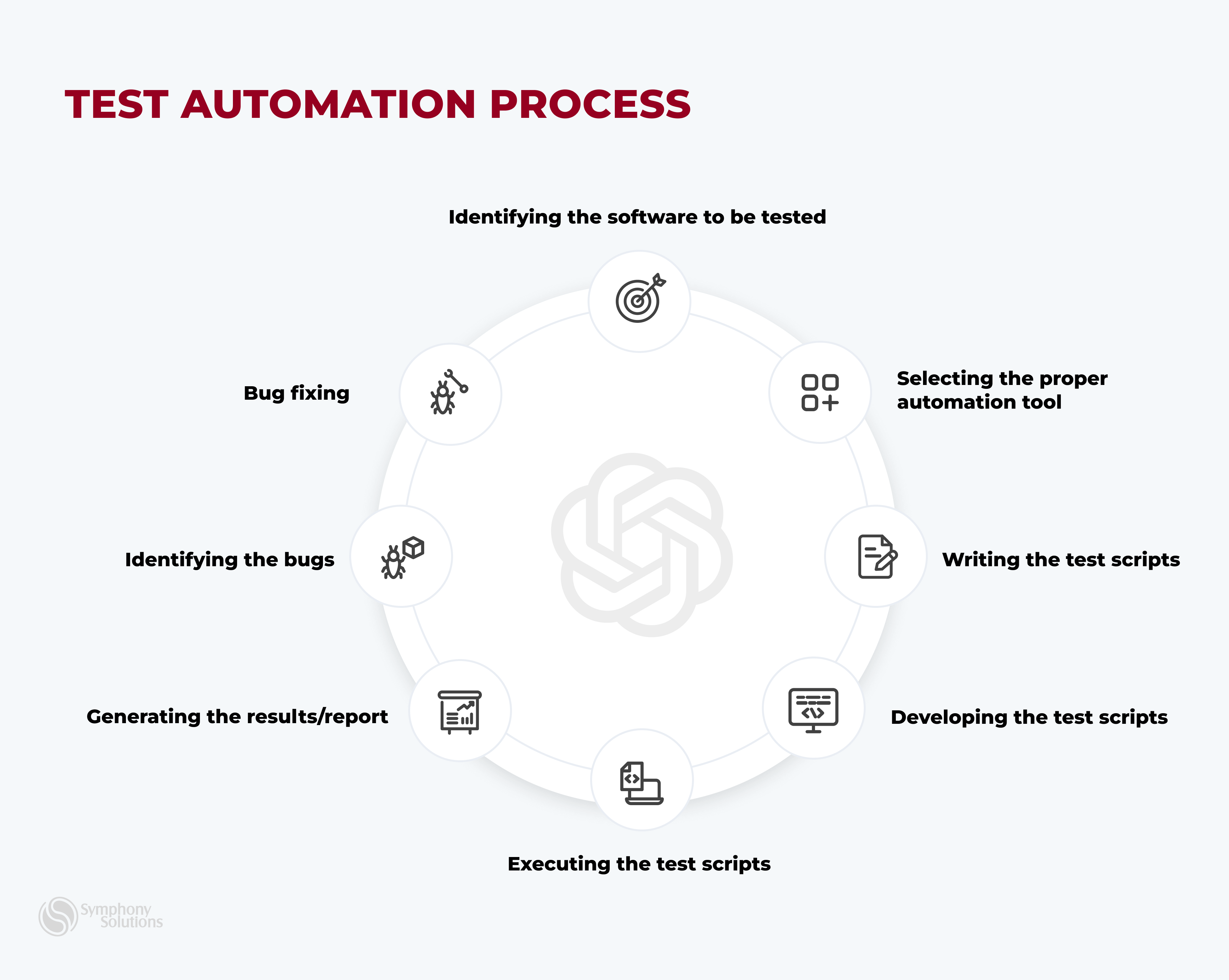

Automation in Chatbot Testing

Understanding when to implement chatbot testing automation is essential for optimizing efficiency. Consider automating your testing process in the following scenarios:

Post-Manual Testing Stability: Automate once manual testing has confirmed the chatbot’s stability, particularly for repetitive tasks and systematic processes.

Efficiency in Repetitive Tasks: Implement automation to enhance efficiency and reduce human error, especially when dealing with repetitive scenarios or large data volumes.

Frequent Updates: When your chatbot undergoes regular updates, automate testing to quickly validate new features while ensuring existing functionality remains intact.

Increasing Complexity: As your chatbot becomes more complex, automate testing for intricate dialogue flows and backend integrations where manual testing may fall short.

Cost-Benefit Analysis: Automate if the benefits – such as time savings, broader coverage, faster deployment, and improved software quality – outweigh the costs.
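The cost-benefit question can be approximated with a simple break-even calculation: how many test runs does it take before the hours saved per run repay the hours spent building the automation? The function below is a rough sketch that considers time only, and all figures in the example are hypothetical.

```python
import math
from typing import Optional


def automation_break_even(build_hours: float,
                          maintenance_hours_per_run: float,
                          manual_hours_per_run: float) -> Optional[int]:
    """Return the number of runs needed to recover the build effort.

    Returns None when automation saves no time per run, meaning it never
    pays off on time alone.
    """
    saving_per_run = manual_hours_per_run - maintenance_hours_per_run
    if saving_per_run <= 0:
        return None
    return math.ceil(build_hours / saving_per_run)


# Hypothetical figures: 40 hours to build the suite, 0.5 hours of upkeep
# per automated run, 4.5 hours for the equivalent manual pass.
runs = automation_break_even(build_hours=40,
                             maintenance_hours_per_run=0.5,
                             manual_hours_per_run=4.5)
print(f"Automation pays for itself after {runs} runs")
```

Benefits such as broader coverage and faster deployment are harder to express in hours, so a result like this is best treated as a lower bound on the value of automating.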

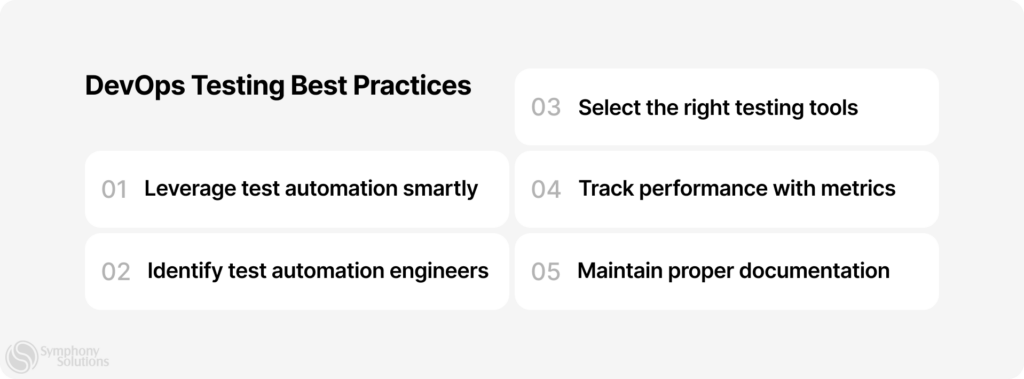

When deciding to automate, selecting the right tool is crucial. Use the following criteria for your selection:

- Compatibility: Choose a tool that aligns with your chatbot platform and tech stack.

- User-Friendly Interface: Opt for a tool that is easy for both developers and testers to use.

- Integration Capabilities: Ensure the tool integrates well with your CI/CD pipeline and version control systems.

- Cross-Platform Testing: The tool should support consistent performance across various devices.

- Reporting and Analytics: Select a tool with robust reporting features for better decision-making.

- Budget Considerations: Make sure the cost of the tool fits within your project budget.

Conclusion

Testing your AI chatbot is crucial to ensure it functions accurately and reliably before deploying it. By rigorously evaluating response accuracy, understanding, and adaptability, you can iron out potential issues, enhance functionality, and instill confidence in end users.

While this guide has covered the nitty-gritty of how to test your AI chatbot, it is best to work with experts to ensure that you get the best results, unless you have a qualified in-house team. For top-tier professional assistance and access to advanced testing tools and methodologies, consider exploring Symphony Solutions’ Software testing and QA services to learn more about how we can elevate your chatbot’s performance to the highest standards.